Follow through this post to learn how to deploy a Ceph storage cluster on Debian 12. Ceph is a scalable distributed storage system designed for cloud infrastructure and web-scale object storage. It can also be used to provide Ceph Block Storage as well as Ceph File System storage.

As of this blog post update, Ceph Reef is the current stable release.

Deploying Ceph Storage Cluster on Debian 12

The Ceph Storage Cluster Daemons

A Ceph Storage Cluster is made up of different daemons, each performing a specific role.

- Ceph Object Storage Daemon (OSD, ceph-osd)

  - It provides the Ceph object data store.

  - It also performs data replication, data recovery and rebalancing, and provides storage information to the Ceph Monitor.

  - At least one OSD is required per storage device.

- Ceph Monitor (ceph-mon)

  - It maintains maps of the entire Ceph cluster state, including the monitor map, manager map, OSD map, and CRUSH map.

  - It manages authentication between daemons and clients.

  - A Ceph cluster must contain a minimum of three running monitors in order to be both redundant and highly available. If there are at least five nodes in the cluster, it is recommended to run five monitors.

- Ceph Manager (ceph-mgr)

  - It keeps track of runtime metrics and the current state of the Ceph cluster, including storage utilization, current performance metrics, and system load.

  - It manages and exposes the Ceph cluster web dashboard and API.

  - At least two managers are required for HA.

- Ceph Metadata Server (MDS)

  - It manages metadata for the Ceph File System (CephFS), coordinates metadata access and ensures consistency across clients.

  - One or more are needed, depending on the requirements of the CephFS.

- RADOS Gateway (RGW)

  - Also called the “Ceph Object Gateway”.

  - It is a component of the Ceph storage system that provides object storage services with a RESTful interface. RGW allows applications and users to interact with Ceph storage using industry-standard APIs, such as the S3 (Simple Storage Service) API (compatible with Amazon S3) and the Swift API (compatible with OpenStack Swift).
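
The monitor counts recommended above follow from majority quorum: the monitors can only agree on the cluster state while more than half of them are up. The short sketch below works through that arithmetic. The function names are illustrative helpers, not part of Ceph.

```shell
# Illustrative helpers (not part of Ceph): majority-quorum arithmetic.

# quorum_majority N -> smallest number of monitors that is more than half of N
quorum_majority() {
    echo $(( $1 / 2 + 1 ))
}

# tolerated_failures N -> monitors that can fail while a majority survives
tolerated_failures() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3 5; do
    echo "monitors=$n majority=$(quorum_majority "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With three monitors the cluster survives the loss of one; with five it survives the loss of two. A single monitor tolerates none, which is why it is not suitable for production.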

Ceph Storage Cluster Deployment Methods

There are different methods you can use to deploy a Ceph storage cluster.

- cephadm leverages container technology (Podman or Docker containers) to deploy and manage Ceph services on a cluster of machines.

- Rook deploys and manages Ceph clusters running in Kubernetes, while also enabling management of storage resources and provisioning via Kubernetes APIs.

- ceph-ansible deploys and manages Ceph clusters using Ansible.

- ceph-salt installs Ceph using Salt and cephadm.

- jaas.ai/ceph-mon installs Ceph using Juju.

- Ceph can be installed via Puppet.

- Ceph can also be installed manually.

cephadm and Rook are the recommended methods for deploying a Ceph storage cluster.

Ceph Deployment Requirements

Depending on the deployment method you choose, there are different requirements for deploying a Ceph storage cluster.

In this tutorial, we will use cephadm to deploy the Ceph storage cluster.

Below are the requirements for deploying a Ceph storage cluster via cephadm:

- Python 3

- Systemd

- Podman or Docker for running containers (we use docker in this setup)

- Time synchronization (such as chrony or NTP)

- LVM2 for provisioning storage devices. You can still use raw disks or raw partitions without a filesystem if you want.

All the required dependencies are installed automatically by the bootstrap process.
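
Before bootstrapping, it can help to confirm which of the requirements above are already present on a node. The sketch below only reports what it finds and changes nothing. The binary names it probes (chronyc for time synchronization, lvcreate for LVM2) are assumptions about how each requirement appears on a Debian 12 host.

```shell
# Report which cephadm prerequisites are on PATH. Read-only: nothing is installed.
# The binary names are assumptions about how each requirement shows up on Debian 12.
check_tool() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "$1: found"
    else
        echo "$1: missing"
    fi
}

preflight() {
    for tool in python3 systemctl chronyc lvcreate; do
        check_tool "$tool"
    done
    # Either container runtime is enough.
    if command -v podman >/dev/null 2>&1 || command -v docker >/dev/null 2>&1; then
        echo "container runtime: found"
    else
        echo "container runtime: missing"
    fi
}

preflight
```

Run it on every node; any line that ends in "missing" points at a package to install in the steps that follow.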

Prepare Ceph Nodes for Ceph Storage Cluster Deployment on Debian 12

Our Ceph Storage Cluster Deployment Architecture

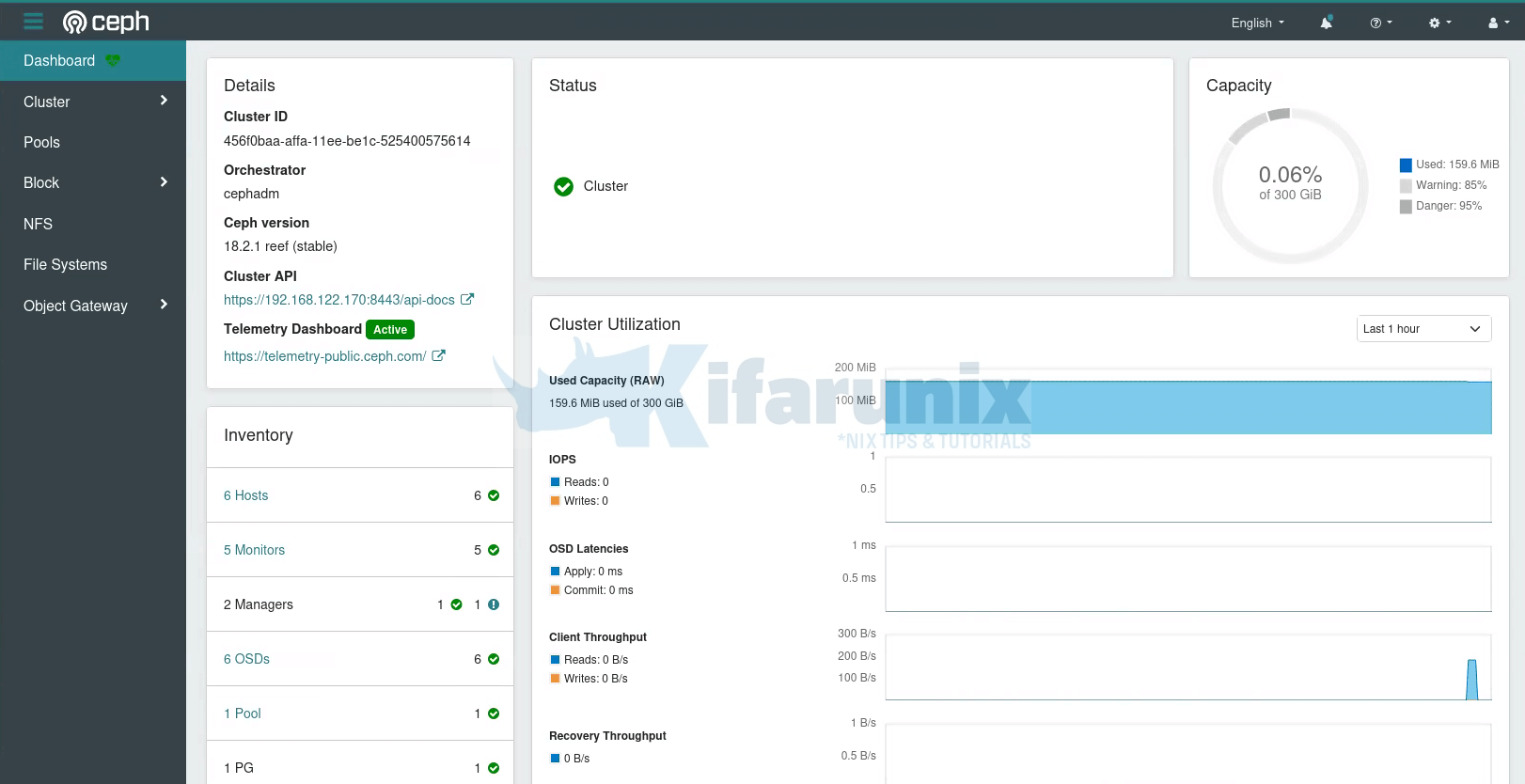

The diagram above depicts our Ceph storage cluster deployment architecture. In a typical production environment, you would have at least 3 monitor nodes as well as at least 3 OSDs.

If your cluster nodes are in the same network subnet, cephadm will automatically add up to five monitors to the subnet, as new hosts are added to the cluster.

Ceph Storage Nodes Hardware Requirements

Check the hardware recommendations page for the Ceph storage cluster nodes hardware requirements.

Create Ceph Deployment User Account

We will be deploying Ceph on Debian 12 using the root user account. So to follow along, ensure you have access to the root account on your Ceph cluster nodes. Bear in mind that the root user account is a superuser account, hence, “With great power comes great responsibility”.

whoami

root

If you would like to use a non-root user account to bootstrap the Ceph cluster, consult the cephadm documentation.

Attach Storage Disks to Ceph OSD Nodes

Each Ceph OSD node in our architecture above has two unallocated raw disks, /dev/vd{a,b}, of 50 GB each.

lsblk

Sample output;

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sda 8:0 0 100G 0 disk

├─sda1 8:1 0 98G 0 part /

├─sda2 8:2 0 1K 0 part

└─sda5 8:5 0 2G 0 part

vda 253:0 0 50G 0 disk

vdb 253:16 0 50G 0 disk

Set Hostnames and Update Hosts File

To begin with, set up your nodes' hostnames;

hostnamectl set-hostname ceph-mgr-mon01

Set the respective hostnames on the other nodes.

If you are not using DNS for name resolution, then update the hosts file accordingly.

For example, in our setup, each node's hosts file should contain the lines below;

less /etc/hosts

...

192.168.122.170 ceph-mgr-mon01

192.168.122.127 ceph-mon02

192.168.122.184 ceph-mon03

192.168.122.188 ceph-osd01

192.168.122.30 ceph-osd02

192.168.122.51 ceph-osd03
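
If you prefer to script the hosts file update, the sketch below appends each entry only when the hostname is not already listed, so it is safe to run more than once. HOSTS_FILE and add_host_entry are illustrative names, and the file defaults to a scratch path (an assumption made so the example can be tried safely). Point it at /etc/hosts once you are happy with the result.

```shell
# Append "IP hostname" pairs to a hosts file, skipping hostnames already present.
# HOSTS_FILE defaults to a scratch file (assumption, for safe experimentation).
HOSTS_FILE="${HOSTS_FILE:-/tmp/hosts.ceph-demo}"

add_host_entry() {
    entry_ip="$1"
    entry_name="$2"
    touch "$HOSTS_FILE"
    # Match the hostname as a whole whitespace-delimited word.
    if ! grep -qE "[[:space:]]${entry_name}([[:space:]]|\$)" "$HOSTS_FILE"; then
        printf '%s %s\n' "$entry_ip" "$entry_name" >> "$HOSTS_FILE"
    fi
}

while read -r ip name; do
    add_host_entry "$ip" "$name"
done <<'EOF'
192.168.122.170 ceph-mgr-mon01
192.168.122.127 ceph-mon02
192.168.122.184 ceph-mon03
192.168.122.188 ceph-osd01
192.168.122.30 ceph-osd02
192.168.122.51 ceph-osd03
EOF
```

For example, running it as `HOSTS_FILE=/etc/hosts sh script.sh` (where script.sh is whatever you saved the snippet as) applies the entries to the real hosts file.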

Set Time Synchronization

Ensure that the time on all the nodes is synchronized. To do so, install Chrony on each node and set it up such that all nodes use the same NTP server.

apt install chrony -y

Edit the Chrony configuration and set your NTP server by replacing the NTP server pools with your NTP server address.

vim /etc/chrony/chrony.conf

Define your NTP server. Replace ntp.kifarunix-demo.com with your respective NTP server address.

...

#pool 2.debian.pool.ntp.org iburst

pool ntp.kifarunix-demo.com iburst

...

Restart Chronyd

systemctl restart chrony

Install SSH Server on Each Node

Ceph deployment through cephadm utility requires that an SSH server is installed on all the nodes.

Debian 12 comes with SSH server already installed. If not, install and start it as follows;

apt install openssh-server

systemctl enable --now ssh

Enable Root Login on Other Nodes from Ceph Admin Node

In order to add other nodes to the Ceph cluster from the Ceph Admin node, you will have to use the root user account.

Thus, on the Ceph Monitor and Ceph OSD nodes, enable root login from the Ceph Admin node;

vim /etc/ssh/sshd_config

Add the configs below, replacing the IP address of the Ceph Admin node accordingly.

Match Address 192.168.122.170

PermitRootLogin yes

Reload SSH;

systemctl reload sshd

Install Python3

Python is required to deploy Ceph. Python 3 is installed by default on Debian 12;

python3 -V

Python 3.11.2

Install Docker CE on Each Node

The cephadm utility is used to bootstrap a Ceph cluster and to manage ceph daemons deployed with systemd and Docker containers.

It can also use Podman, which will be installed along with other Ceph packages as can be seen in the later stages of this guide.

To install Docker CE on each Ceph cluster node, follow the guide below;

How to Install Docker CE on Debian 12

Install LVM Package on each Node

Ceph requires LVM2 for provisioning storage devices. Install the package on each node.

apt install lvm2 -y

Setup Ceph Storage Cluster on Debian 12

Install cephadm Utility on Ceph Admin Node

On the Ceph admin node, you need to install the cephadm utility.

Cephadm installs and manages a Ceph cluster using containers and systemd, with tight integration with the CLI and dashboard GUI.

- cephadm only supports Octopus and newer releases.

- cephadm is fully integrated with the new orchestration API and fully supports the new CLI and dashboard features to manage cluster deployment.

- cephadm requires container support (podman or docker) and Python 3.

If you check the cephadm package provided by the default Debian repos, it is an older version. The current version as of this writing is 18.2.1.

apt-cache policy cephadm

cephadm:

Installed: (none)

Candidate: 16.2.11+ds-2

Version table:

16.2.11+ds-2 500

500 http://deb.debian.org/debian bookworm/main amd64 Packages

To install the current cephadm release version, you need the current Ceph release repos installed.

To install Ceph release repos on Debian 12, run the commands below

wget -q -O- 'https://download.ceph.com/keys/release.asc' | \
gpg --dearmor -o /etc/apt/trusted.gpg.d/cephadm.gpg

echo deb https://download.ceph.com/debian-reef/ $(lsb_release -sc) main \
> /etc/apt/sources.list.d/ceph.list

apt update

Then, check the available version of the cephadm package now.

apt-cache policy cephadm

cephadm:

Installed: (none)

Candidate: 18.2.1-1~bpo12+1

Version table:

18.2.1-1~bpo12+1 500

500 https://download.ceph.com/debian-reef bookworm/main amd64 Packages

16.2.11+ds-2 500

500 http://deb.debian.org/debian bookworm/main amd64 Packages

As you can see, the Ceph repo provides the current release version of the cephadm package. Thus, install it as follows;

apt install cephadm

During the installation, you may see some errors relating to the cephadm user account that is being created. Since we are using the root user account to bootstrap our Ceph cluster, you can ignore these errors.
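
The repository line used earlier relies on lsb_release, which may not be installed on a minimal system. As a hedged alternative, the sketch below builds the same line from the VERSION_CODENAME field of /etc/os-release. The function names are illustrative, and the fallback to bookworm is an assumption that only holds for Debian 12.

```shell
# Build the Ceph apt source line without relying on lsb_release.
# ceph_repo_line RELEASE CODENAME
ceph_repo_line() {
    echo "deb https://download.ceph.com/debian-$1/ $2 main"
}

# Print the distribution codename from /etc/os-release, or nothing if unavailable.
detect_codename() {
    if [ -r /etc/os-release ]; then
        # Source in a subshell so the file's variables do not leak into this shell.
        ( . /etc/os-release && echo "${VERSION_CODENAME:-}" )
    fi
}

codename="$(detect_codename)"
# Fall back to bookworm (assumption: the host is Debian 12).
ceph_repo_line reef "${codename:-bookworm}"
```

The printed line is what would be written to /etc/apt/sources.list.d/ceph.list.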

Initialize Ceph Cluster Monitor On Ceph Admin Node

Your nodes are now ready to deploy a Ceph storage cluster.

It is now time to bootstrap the Ceph cluster in order to create the first Ceph monitor daemon on Ceph admin node. Thus, run the command below, substituting the IP address with that of the Ceph admin node accordingly.

cephadm bootstrap --mon-ip 192.168.122.170

Creating directory /etc/ceph for ceph.conf

Verifying podman|docker is present...

Verifying lvm2 is present...

Verifying time synchronization is in place...

Unit chrony.service is enabled and running

Repeating the final host check...

docker (/usr/bin/docker) is present

systemctl is present

lvcreate is present

Unit chrony.service is enabled and running

Host looks OK

Cluster fsid: 456f0baa-affa-11ee-be1c-525400575614

Verifying IP 192.168.122.170 port 3300 ...

Verifying IP 192.168.122.170 port 6789 ...

Mon IP `192.168.122.170` is in CIDR network `192.168.122.0/24`

Mon IP `192.168.122.170` is in CIDR network `192.168.122.0/24`

Internal network (--cluster-network) has not been provided, OSD replication will default to the public_network

Pulling container image quay.io/ceph/ceph:v18...

Ceph version: ceph version 18.2.1 (7fe91d5d5842e04be3b4f514d6dd990c54b29c76) reef (stable)

Extracting ceph user uid/gid from container image...

Creating initial keys...

Creating initial monmap...

Creating mon...

Waiting for mon to start...

Waiting for mon...

mon is available

Assimilating anything we can from ceph.conf...

Generating new minimal ceph.conf...

Restarting the monitor...

Setting public_network to 192.168.122.0/24 in mon config section

Wrote config to /etc/ceph/ceph.conf

Wrote keyring to /etc/ceph/ceph.client.admin.keyring

Creating mgr...

Verifying port 0.0.0.0:9283 ...

Verifying port 0.0.0.0:8765 ...

Verifying port 0.0.0.0:8443 ...

Waiting for mgr to start...

Waiting for mgr...

mgr not available, waiting (1/15)...

mgr not available, waiting (2/15)...

mgr not available, waiting (3/15)...

mgr is available

Enabling cephadm module...

Waiting for the mgr to restart...

Waiting for mgr epoch 5...

mgr epoch 5 is available

Setting orchestrator backend to cephadm...

Generating ssh key...

Wrote public SSH key to /etc/ceph/ceph.pub

Adding key to root@localhost authorized_keys...

Adding host ceph-mgr-mon01...

Deploying mon service with default placement...

Deploying mgr service with default placement...

Deploying crash service with default placement...

Deploying ceph-exporter service with default placement...

Deploying prometheus service with default placement...

Deploying grafana service with default placement...

Deploying node-exporter service with default placement...

Deploying alertmanager service with default placement...

Enabling the dashboard module...

Waiting for the mgr to restart...

Waiting for mgr epoch 9...

mgr epoch 9 is available

Generating a dashboard self-signed certificate...

Creating initial admin user...

Fetching dashboard port number...

Ceph Dashboard is now available at:

URL: https://ceph-mgr-mon01:8443/

User: admin

Password: 0lquv02zaw

Enabling client.admin keyring and conf on hosts with "admin" label

Saving cluster configuration to /var/lib/ceph/456f0baa-affa-11ee-be1c-525400575614/config directory

Enabling autotune for osd_memory_target

You can access the Ceph CLI as following in case of multi-cluster or non-default config:

sudo /usr/sbin/cephadm shell --fsid 456f0baa-affa-11ee-be1c-525400575614 -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Or, if you are only running a single cluster on this host:

sudo /usr/sbin/cephadm shell

Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/en/latest/mgr/telemetry/

Bootstrap complete.

According to the documentation, the bootstrap command will:

- Create a monitor and manager daemon for the new cluster on the local host.

- Generate a new SSH key for the Ceph cluster and add it to the root user’s /root/.ssh/authorized_keys file.

- Write a copy of the public key to /etc/ceph/ceph.pub.

- Write a minimal configuration file to /etc/ceph/ceph.conf. This file is needed to communicate with the new cluster.

- Write a copy of the client.admin administrative (privileged!) secret key to /etc/ceph/ceph.client.admin.keyring.

- Add the _admin label to the bootstrap host. By default, any host with this label will (also) get a copy of /etc/ceph/ceph.conf and /etc/ceph/ceph.client.admin.keyring.

Enable Ceph CLI

When the bootstrap command completes, a command for accessing the Ceph CLI is provided. Execute that command to access the Ceph CLI in case of a multi-cluster or non-default config:

sudo /usr/sbin/cephadm shell \
--fsid 456f0baa-affa-11ee-be1c-525400575614 \
-c /etc/ceph/ceph.conf \
-k /etc/ceph/ceph.client.admin.keyring

Otherwise, for the default config, just execute;

sudo cephadm shell

This drops you into the Ceph CLI. You should see your shell prompt change!

root@ceph-mgr-mon01:/#

You can now run ceph commands, e.g. to check the Ceph status;

ceph -s

cluster:

id: 456f0baa-affa-11ee-be1c-525400575614

health: HEALTH_WARN

OSD count 0 < osd_pool_default_size 3

services:

mon: 1 daemons, quorum ceph-mgr-mon01 (age 8m)

mgr: ceph-mgr-mon01.gioqld(active, since 7m)

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

You can exit the Ceph CLI by pressing Ctrl+D or by typing exit and pressing ENTER.

There are other ways in which you can access the Ceph CLI. For example, you can run Ceph CLI commands using the cephadm command.

cephadm shell -- ceph -s

Or you could install the Ceph CLI tools on the host (ignore errors about the cephadm user account);

apt install ceph-common

With this method, you can run the Ceph commands directly;

ceph -s

Copy SSH Keys to Other Ceph Nodes

Copy the SSH public key generated by the bootstrap command to the root user account on the Ceph Monitor and OSD nodes. Ensure that root login is permitted on those nodes.

for i in ceph-mon02 ceph-mon03 ceph-osd01 ceph-osd02 ceph-osd03; do ssh-copy-id -f -i /etc/ceph/ceph.pub root@$i; done

Drop into Ceph CLI

You can drop into the Ceph CLI to execute the next commands.

cephadm shell

Or, if you installed the ceph-common package, there is no need to drop into the CLI as you can execute the ceph commands directly from the terminal.

Add Ceph Monitor Node to Ceph Cluster

At this point, we have provisioned the Ceph Admin node only. You can list all the hosts known to the Ceph orchestrator (ceph-mgr) using the command below;

ceph orch host ls

Sample output;

HOST ADDR LABELS STATUS

ceph-mgr-mon01 192.168.122.170 _admin

1 hosts in cluster

So next, add the Ceph Monitor nodes to the cluster.

Assuming you have copied the Ceph SSH public key, execute the command below to add the Ceph Monitor nodes to the cluster;

for i in 02 03; do ceph orch host add ceph-mon$i; done

Sample command output;

Added host 'ceph-mon02' with addr '192.168.122.127'

Added host 'ceph-mon03' with addr '192.168.122.184'

Next, label the nodes as per their roles;

ceph orch host label add ceph-mgr-mon01 ceph-mgr-mon01

for i in 02 03; do ceph orch host label add ceph-mon$i mon$i; done

Kindly note that if you have 5 or more nodes (including OSD nodes and admin nodes) in the cluster within the same network, a maximum of 5 nodes will be automatically assigned monitor roles. If you have nodes in different networks, a minimum of three monitors is recommended!

Similarly, there will be at least two managers deployed automatically.

Add Ceph OSD Nodes to Ceph Cluster

Similarly, add the OSD Nodes to the cluster;

for i in 01 02 03; do ceph orch host add ceph-osd$i; done

Define their respective labels;

for i in 01 02 03; do ceph orch host label add ceph-osd$i osd$i; done

List Ceph Cluster Nodes

You can list the Ceph cluster nodes;

ceph orch host ls

Sample output;

HOST ADDR LABELS STATUS

ceph-mgr-mon01 192.168.122.170 _admin,ceph-mgr-mon01

ceph-mon02 192.168.122.127 mon02

ceph-mon03 192.168.122.184 mon03

ceph-osd01 192.168.122.188 osd01

ceph-osd02 192.168.122.30 osd02

ceph-osd03 192.168.122.51 osd03

6 hosts in cluster
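
The host additions above rely on each hostname resolving correctly. `ceph orch host add` also accepts the host's IP address as a second argument, which removes that dependency. The sketch below only prints the commands for review rather than running them. print_host_add_cmds is an illustrative helper name, and the input format ("IP hostname" per line, as in the hosts file) is an assumption.

```shell
# Print (do not run) one `ceph orch host add <host> <ip>` command per input line.
# Input: "IP hostname" pairs on stdin, one per line.
print_host_add_cmds() {
    while read -r ip name; do
        # Skip blank or incomplete lines.
        [ -n "$name" ] || continue
        echo "ceph orch host add $name $ip"
    done
}

print_host_add_cmds <<'EOF'
192.168.122.127 ceph-mon02
192.168.122.184 ceph-mon03
192.168.122.188 ceph-osd01
192.168.122.30 ceph-osd02
192.168.122.51 ceph-osd03
EOF
```

Once the printed commands look right, they can be pasted into the Ceph CLI or piped to a shell.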

Create Ceph OSDs from OSD Nodes Drives

If your OSD nodes use LVM logical volumes, you can create a Ceph OSD from a logical volume with the command below. Replace the host name (ceph-mon in this example) and ceph-vg/ceph-lv with your host, volume group and logical volume names accordingly. Otherwise, use the raw device path.

sudo ceph orch daemon add osd ceph-mon:ceph-vg/ceph-lv

Command output;

Created osd(s) 0 on host 'ceph-mon'

Repeat the same for the other OSD nodes.

sudo ceph orch daemon add osd ceph-osd1:ceph-vg/ceph-lv

sudo ceph orch daemon add osd ceph-osd2:ceph-vg/ceph-lv

The Ceph OSDs are now ready for use.

In our setup, we have unallocated raw disks of 50G on each OSD node to be used as BlueStore devices for the OSD daemons.

You can list the devices that are available on the OSD nodes for creating OSDs using the command below;

ceph orch device ls

A storage device is considered available if all of the following conditions are met:

- The device must have no partitions.

- The device must not have any LVM state.

- The device must not be mounted.

- The device must not contain a file system.

- The device must not contain a Ceph BlueStore OSD.

- The device must be larger than 5 GB.

Sample output;

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

ceph-osd01 /dev/vda hdd 50.0G Yes 6m ago

ceph-osd01 /dev/vdb hdd 50.0G Yes 6m ago

ceph-osd02 /dev/vda hdd 50.0G Yes 5m ago

ceph-osd02 /dev/vdb hdd 50.0G Yes 5m ago

ceph-osd03 /dev/vda hdd 50.0G Yes 5m ago

ceph-osd03 /dev/vdb hdd 50.0G Yes 5m ago
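
When a cluster has many disks, it can be handy to reduce this listing to just the usable devices. The sketch below filters text in the plain layout shown above and prints the host and path of every row marked "Yes". It is a rough illustration: available_devices is a made-up helper name, and because the position of the AVAILABLE column shifts when DEVICE ID is populated, it scans all fields instead of using a fixed column index.

```shell
# Print "host path" for rows whose AVAILABLE column reads "Yes".
# Assumes the plain-text layout shown in this post (header on the first line).
available_devices() {
    awk 'NR > 1 {
        for (i = 3; i <= NF; i++) {
            if ($i == "Yes") { print $1, $2; break }
        }
    }'
}

# Example with captured output; on a live cluster the input would come from
# `ceph orch device ls` instead.
available_devices <<'EOF'
HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS
ceph-osd01 /dev/vda hdd 50.0G Yes 6m ago
ceph-osd01 /dev/vdb hdd 50.0G No 6m ago Has BlueStore device label
EOF
```

For the two sample rows, only ceph-osd01 /dev/vda is printed.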

You can add all the available devices as Ceph OSDs at once or add them one by one.

To attach them all at once;

ceph orch apply osd --all-available-devices --method {raw|lvm}

Use the raw method if you are using raw disks (like in our case here).

ceph orch apply osd --all-available-devices --method raw

Otherwise, if you are using LVM volumes, use the lvm method;

ceph orch apply osd --all-available-devices --method lvm

Command output;

Scheduled osd.all-available-devices update...

Note that when you add devices using this approach;

- If you add new disks to the cluster, they will be automatically used to create new OSDs.

- In the event that an OSD is removed, and the LVM physical volume is cleaned, a new OSD will be generated automatically.

If you wish to prevent this behavior (i.e., disable the automatic creation of OSDs on available devices), use the 'unmanaged' parameter:

ceph orch apply osd --all-available-devices --unmanaged=true

To manually create an OSD from a specific device on a specific host:

ceph orch daemon add osd <host>:<device-path>

If you check again, the disks are now added to Ceph and are no longer available for other use;

ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

ceph-osd01 /dev/vda hdd 50.0G No 10s ago Has BlueStore device label

ceph-osd01 /dev/vdb hdd 50.0G No 10s ago Has BlueStore device label

ceph-osd02 /dev/vda hdd 50.0G No 10s ago Has BlueStore device label

ceph-osd02 /dev/vdb hdd 50.0G No 10s ago Has BlueStore device label

ceph-osd03 /dev/vda hdd 50.0G No 10s ago Has BlueStore device label

ceph-osd03 /dev/vdb hdd 50.0G No 10s ago Has BlueStore device label
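
With all six 50G devices consumed as OSDs, it is worth estimating how much data the cluster can actually hold. The sketch below is a back-of-the-envelope calculation that assumes replicated pools with three copies (the osd_pool_default_size of 3 reported earlier by ceph -s) and ignores BlueStore overhead and the headroom Ceph keeps before it considers OSDs full. usable_gib is an illustrative helper name.

```shell
# Rough usable capacity for replicated pools.
# usable_gib OSD_COUNT OSD_SIZE_GIB REPLICA_COUNT
usable_gib() {
    echo $(( $1 * $2 / $3 ))
}

raw=$(( 6 * 50 ))
echo "raw capacity: ${raw} GiB"
echo "usable at 3 replicas: $(usable_gib 6 50 3) GiB"
```

This prints a raw capacity of 300 GiB and roughly 100 GiB usable, since every object is stored three times.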

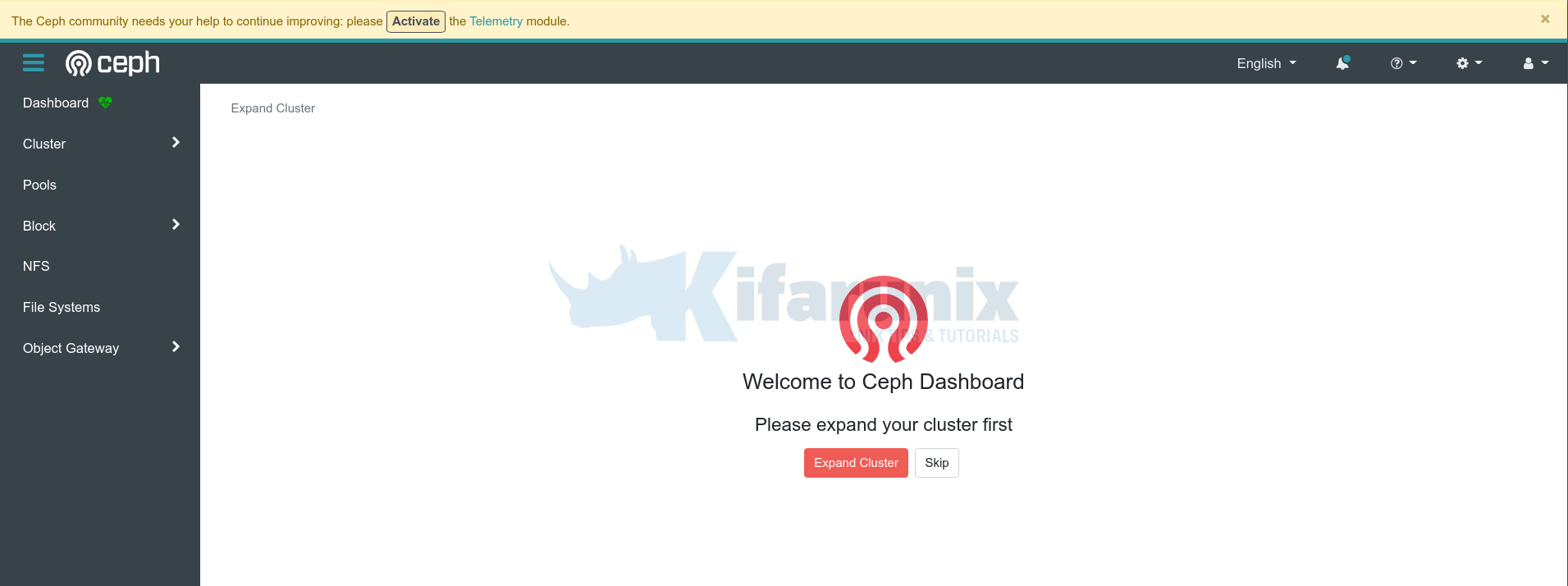

Check Ceph Cluster Health

To verify the health status of the Ceph cluster, simply execute the command ceph -s on the admin node or even on each OSD node (if you have installed the cephadm/ceph commands there).

To check Ceph cluster health status from the admin node;

ceph -s

Sample output;

cluster:

id: 456f0baa-affa-11ee-be1c-525400575614

health: HEALTH_OK

services:

mon: 5 daemons, quorum ceph-mgr-mon01,ceph-mon02,ceph-mon03,ceph-osd01,ceph-osd03 (age 14m)

mgr: ceph-mgr-mon01.htboob(active, since 45m), standbys: ceph-mon02.wgbbcc

osd: 6 osds: 6 up (since 3m), 6 in (since 4m)

data:

pools: 1 pools, 1 pgs

objects: 2 objects, 577 KiB

usage: 160 MiB used, 300 GiB / 300 GiB avail

pgs: 1 active+clean
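
If you want to use the health status in a script, for example to pause a maintenance job until the cluster is healthy, the health line can be picked out of this output. The sketch below reads `ceph -s` style text on stdin. cluster_health and is_healthy are illustrative helper names, and the approach assumes the health: line format shown above.

```shell
# Print the value of the "health:" line from `ceph -s` style text on stdin.
cluster_health() {
    awk '$1 == "health:" { print $2; exit }'
}

# Succeed only when the reported health is HEALTH_OK.
is_healthy() {
    [ "$(cluster_health)" = "HEALTH_OK" ]
}

# Example with captured output; on a live cluster: ceph -s | is_healthy
sample='cluster:
id: 456f0baa-affa-11ee-be1c-525400575614
health: HEALTH_WARN
OSD count 0 < osd_pool_default_size 3'

if echo "$sample" | is_healthy; then
    echo "cluster is healthy"
else
    echo "cluster needs attention: $(echo "$sample" | cluster_health)"
fi
```

The sample here is the pre-OSD status from earlier in this guide, so the script reports HEALTH_WARN.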

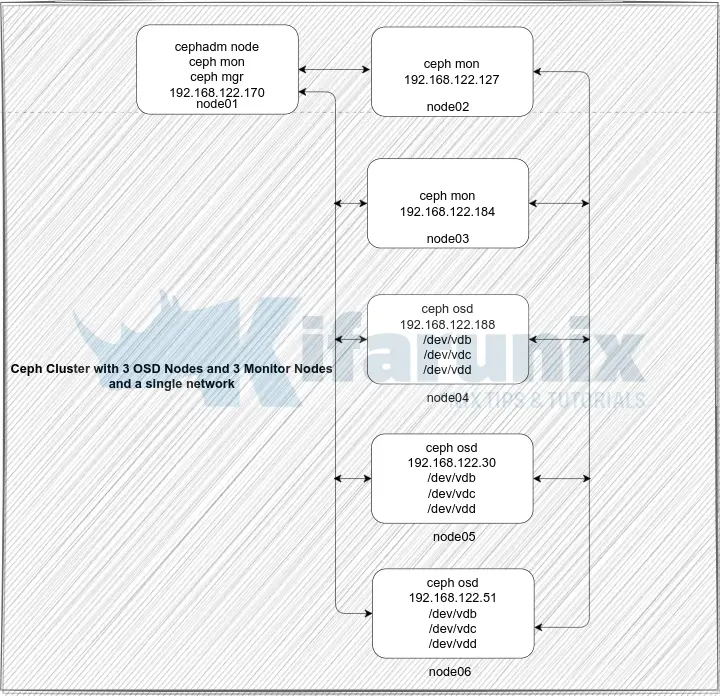

Accessing Ceph Admin Web User Interface

The bootstrap command gives a URL and credentials to use to access the Ceph admin web user interface;

Ceph Dashboard is now available at:

URL: https://ceph-mgr-mon01:8443/

User: admin

Password: 0lquv02zaw

Thus, open the browser and navigate to the URL above. You can use the cephadm node's resolvable hostname or its IP address. Sample URL: https://ceph-mgr-mon01:8443.

Open port 8443/TCP on the firewall if any is running.

Enter the provided credentials, reset your admin password and proceed to log in to the Ceph Admin UI.

If you want, you can activate the telemetry module by clicking the Activate button or from the Ceph admin node CLI;

cephadm shell -- ceph telemetry on --license sharing-1-0

Go through the other Ceph menus to see more about Ceph.

Ceph Dashboard;

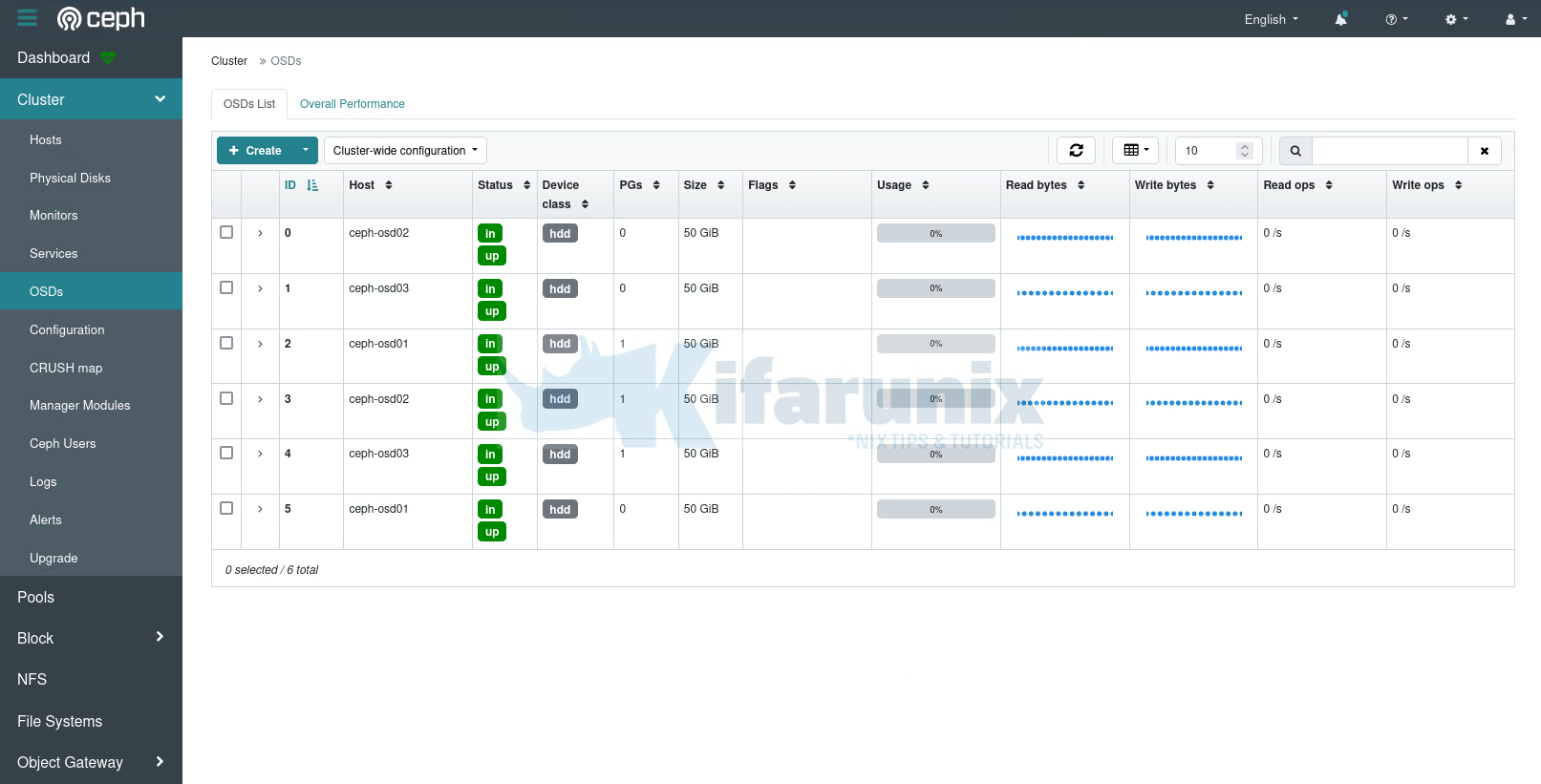

Under the Cluster menu, you can see other details such as hosts, disks and OSDs.

There you go. That marks the end of our tutorial on how to deploy a Ceph storage cluster on Debian 12.