Follow this guide to learn how to deploy an ELK Stack 8 cluster on Docker containers. Deploying a multi-node ELK Stack 8 cluster on Docker containers across separate nodes offers a flexible and scalable solution for managing distributed search and analytics workloads. Docker provides a lightweight and portable containerization environment, allowing Elastic components to be easily deployed and orchestrated across various environments. As of this writing, Elastic 8.12.0 is the current stable release.


Deploying ELK Stack 8 Cluster on Docker Containers

We will be deploying a three-node ELK Stack 8 cluster on Docker containers.

Note that a cluster typically involves multiple nodes working together to store and process data. In the context of Elastic, a cluster is formed when multiple Elasticsearch nodes join together to share the workload and provide high availability. If you run three Elasticsearch containers on a single host, they can form a cluster, but they all share the same hardware, so a single host failure takes the whole cluster down. A highly available Elasticsearch cluster runs its Elasticsearch instances on separate hosts, allowing them to communicate and work together as a unified system.

Install Docker Engine

Depending on your host system's distribution, install Docker Engine on each node by following the installation guide for that distribution.

Check the installed Docker version;

docker --version

Sample output;

Docker version 24.0.7, build afdd53b

Install Docker Compose

If you are running an Elasticsearch cluster in Docker containers for test/development purposes, you can simply create the containers using docker run commands. Otherwise, use Docker Compose to deploy production Elasticsearch containers!

Download and install Docker Compose on a Linux system. Be sure to replace the VER variable below with the current stable release version of Docker Compose.

Check the current stable release version of Docker Compose on its GitHub releases page. As of this writing, Docker Compose 2.24.1 is the current stable release.

sudo su -
VER=2.24.1
curl -L "https://github.com/docker/compose/releases/download/v$VER/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

This downloads the Docker Compose binary to the /usr/local/bin directory.

Make the Docker compose binary executable;

chmod +x /usr/local/bin/docker-compose

You should now be able to use Docker Compose (docker-compose) on the CLI.
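The download step above can be wrapped in small helpers so that every node builds the same URL and you can confirm the installed version programmatically. Here is a minimal sketch in POSIX shell; compose_download_url and compose_version are hypothetical helper names (not part of Docker Compose), and the URL pattern simply mirrors the curl command above.

```shell
#!/bin/sh
# Hypothetical helpers, not part of Docker Compose itself.

# Print the release download URL using the same pattern as the curl command above:
# .../download/v<VER>/docker-compose-<uname -s>-<uname -m>
compose_download_url() {
  ver=$1                   # e.g. 2.24.1
  os=${2:-$(uname -s)}     # defaults to the current kernel name, e.g. Linux
  arch=${3:-$(uname -m)}   # defaults to the current machine type, e.g. x86_64
  printf 'https://github.com/docker/compose/releases/download/v%s/docker-compose-%s-%s\n' \
    "$ver" "$os" "$arch"
}

# Extract the bare version number from `docker-compose version` output,
# e.g. "Docker Compose version v2.24.1" -> "2.24.1".
compose_version() {
  printf '%s\n' "$1" | sed -n 's/.*version v\{0,1\}\([0-9][0-9.]*\).*/\1/p'
}
```

For example, as root, `curl -fL "$(compose_download_url 2.24.1)" -o /usr/local/bin/docker-compose` performs the same download (the -f flag makes curl fail on an HTTP error instead of saving the error page as the binary), and `compose_version "$(docker-compose version)"` should then print 2.24.1.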

Check the version of installed Docker compose to confirm that it is working as expected.

docker-compose version

Sample output;

Docker Compose version v2.24.1

Important Elasticsearch Settings

Disable Memory Swapping on a Container

There are different ways to disable swapping. In an Elasticsearch Docker container, you can set the bootstrap.memory_lock option to true. With this option, you also need to ensure that no maximum limit is imposed on the locked-in-memory address space (the Docker equivalent of LimitMEMLOCK=infinity for a systemd service).

In a Docker Compose file, these memory lock limits are defined per service as follows;

ulimits:
  memlock:
    soft: -1
    hard: -1

You can confirm the default values set on a Docker image using the command below;

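docker run --rm docker.elastic.co/elasticsearch/elasticsearch:8.12.0 /bin/bash -c 'ulimit -Hl && ulimit -Sl'

The -l option reports the maximum locked-memory size (-m reports the maximum resident set size, which is a different limit). For memory locking to work, both the hard and the soft value must be;

unlimited

unlimited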

If any other value is printed, set the limits in the Docker Compose file as shown above so that both the soft and hard limits are unlimited.
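Setting bootstrap.memory_lock only helps if the lock actually succeeds. Once the cluster is running, Elastic documents the request GET _nodes?filter_path=**.mlockall for checking this, and every node should report "mlockall": true. Here is a minimal sketch of a helper that checks such a response; all_nodes_mlocked is a hypothetical name, and it does simple text matching rather than real JSON parsing.

```shell
#!/bin/sh
# Hypothetical helper: reads a response from
#   GET _nodes?filter_path=**.mlockall
# on stdin and succeeds only if at least one node reports "mlockall": true
# and no node reports "mlockall": false. This is plain text matching,
# not a full JSON parser.
all_nodes_mlocked() {
  body=$(cat)
  printf '%s' "$body" | grep -q '"mlockall" *: *true' || return 1
  ! printf '%s' "$body" | grep -q '"mlockall" *: *false'
}
```

For example, once the cluster is up and with ELASTIC_PASSWORD exported in your shell: `docker exec es01 curl -s --cacert config/certs/ca/ca.crt -u "elastic:$ELASTIC_PASSWORD" "https://es01:9200/_nodes?filter_path=**.mlockall" | all_nodes_mlocked && echo "memory lock active on all nodes"`. Quote the URL so that your shell does not try to expand the ** pattern.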

Set JVM Heap Size on All Cluster Nodes

By default, Elasticsearch sets the heap size automatically based on the node's roles and the total memory available to the node's container. This is the recommended approach! For Elasticsearch Docker containers, any custom JVM settings can be defined using the ES_JAVA_OPTS environment variable, either in the Compose file or on the command line.

For example, to set both the minimum and maximum JVM heap size to 512 MB, use ES_JAVA_OPTS="-Xms512m -Xmx512m".
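If you do override the heap, Elastic's guidance is to set -Xms and -Xmx to the same value, use no more than 50% of the memory available, and stay below the compressed-oops threshold (26 GB is described as safe on most systems). Here is a minimal sketch that turns a container memory budget into an ES_JAVA_OPTS value under those assumptions; es_heap_opts is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: derive an ES_JAVA_OPTS value from the memory (in MiB)
# you intend to give the Elasticsearch container.
#  - heap = half of the container memory
#  - capped at 26 GiB (26624 MiB) to stay under the compressed-oops threshold
#  - Xms and Xmx are always equal
es_heap_opts() {
  mem_mb=$1
  heap_mb=$((mem_mb / 2))
  if [ "$heap_mb" -gt 26624 ]; then
    heap_mb=26624
  fi
  printf '%s\n' "-Xms${heap_mb}m -Xmx${heap_mb}m"
}
```

For example, `es_heap_opts 2048` prints -Xms1024m -Xmx1024m, the same 1 GB heap that the Compose files below set with -Xms1g -Xmx1g.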

Set Maximum Open File Descriptors and Processes on Elasticsearch Containers

Ensure that the maximum number of open files (nofile) for the containers is at least 65,536 and the maximum number of processes (nproc) is at least 4096 (both soft and hard limits).

You can use the command below to check the maximum number of user processes (-u) and the maximum number of open files (-n);

Replace the value of the VER variable below with the current Elastic release version!

VER=8.12.0
docker run --rm docker.elastic.co/elasticsearch/elasticsearch:${VER} bash -c 'ulimit -Hn && ulimit -Sn && ulimit -Hu && ulimit -Su'

Sample output;

1048576

1048576

unlimited

unlimited

If you need to set these limits explicitly, they look like this in a Docker Compose file. Adjust them accordingly!

ulimits:
  nofile:
    soft: 65536
    hard: 65536
  nproc:
    soft: 4096
    hard: 4096
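To compare the values printed by the docker run command above against these minimums, a small helper can treat unlimited as passing and compare numbers otherwise. Here is a minimal sketch; limit_at_least and check_es_limits are hypothetical names, and the argument order assumes the -Hn, -Sn, -Hu, -Su order used in that command.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if a ulimit value (as printed by `ulimit -Hn`,
# `ulimit -Su`, etc.) is "unlimited" or at least the given minimum.
limit_at_least() {
  value=$1
  minimum=$2
  [ "$value" = "unlimited" ] && return 0
  [ "$value" -ge "$minimum" ]
}

# Hypothetical wrapper: takes the four values printed by the docker run command
# above, in the same order (-Hn, -Sn, -Hu, -Su), and checks them against
# 65536 open files and 4096 processes.
check_es_limits() {
  limit_at_least "$1" 65536 && limit_at_least "$2" 65536 &&
    limit_at_least "$3" 4096 && limit_at_least "$4" 4096
}
```

For example, `check_es_limits $(docker run --rm docker.elastic.co/elasticsearch/elasticsearch:${VER} bash -c 'ulimit -Hn && ulimit -Sn && ulimit -Hu && ulimit -Su') && echo "limits OK"`; the command substitution is left unquoted on purpose so that the four printed values become four arguments.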

Update Virtual Memory Settings on All Cluster Nodes

Elasticsearch uses an mmapfs directory by default to store its indices. To ensure that you do not run out of virtual memory, set vm.max_map_count in /etc/sysctl.conf on each Docker host as shown below.

vm.max_map_count=262144

On each cluster node, run the command below to add this setting;

echo "vm.max_map_count=262144" >> /etc/sysctl.conf

To apply the changes;

sysctl -p

Deploy ELK Stack 8 on First Cluster Docker Host
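Before deploying, make sure every node has the vm.max_map_count setting from the previous section in place. Because the echo ... >> command appends another line each time it runs, you may prefer an idempotent helper. Here is a minimal sketch; ensure_sysctl is a hypothetical name, and the in-place edit assumes GNU (or BusyBox) sed -i.

```shell
#!/bin/sh
# Hypothetical helper: set KEY=VALUE in a sysctl-style file without creating
# duplicates. An existing "KEY=..." (or "KEY = ...") line is rewritten in place;
# otherwise the setting is appended.
ensure_sysctl() {
  file=$1
  key=$2
  value=$3
  if grep -q "^${key}[[:space:]]*=" "$file" 2>/dev/null; then
    # Rewrite the existing entry (GNU/BusyBox sed -i syntax).
    sed -i "s|^${key}[[:space:]]*=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}
```

As root on each node, `ensure_sysctl /etc/sysctl.conf vm.max_map_count 262144 && sysctl -p` can be re-run safely; afterwards `sysctl -n vm.max_map_count` should print 262144.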

Create a Network Share for Docker Volumes

In this setup, we run ELK Stack 8 containers on three separate nodes. As a result, there are a number of files that need to be shared among the containers across the three nodes. While there are other ways to share files between containers running on separate nodes, the simplest option for this setup was to use NFS shares for the volumes and files shared across the containers.

Hence, we have deployed an NFS server (at 192.168.122.60) and exported a directory with the files that need to be shared across the ELK Stack 8 cluster.

This is our NFS share export configuration;

cat /etc/exports

/mnt/elkstack/ 192.168.122.152(rw,sync,no_root_squash,no_subtree_check) 192.168.122.123(rw,sync,no_root_squash,no_subtree_check) 192.168.122.60(rw,sync,no_root_squash,no_subtree_check)

As you can see, we create an NFS share under /mnt/elkstack and allow access from our ELK stack cluster nodes.
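If you add or remove cluster nodes later, regenerating the export line is less error-prone than editing it by hand. Here is a minimal sketch; nfs_export_line is a hypothetical helper that reuses the export options shown above for every client IP.

```shell
#!/bin/sh
# Hypothetical helper: print an /etc/exports line that shares DIR ($1) with
# each client IP given in the remaining arguments, using the same options
# as the export shown above.
nfs_export_line() {
  dir=$1
  shift
  opts='rw,sync,no_root_squash,no_subtree_check'
  line=$dir
  for ip in "$@"; do
    line="$line $ip($opts)"
  done
  printf '%s\n' "$line"
}
```

For example, as root on the NFS server, `nfs_export_line /mnt/elkstack/ 192.168.122.152 192.168.122.123 192.168.122.60 >> /etc/exports` followed by `exportfs -ra` reloads the exports; from a client node, `showmount -e 192.168.122.60` should then list the share.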

In this share, we have created the following directories:

- /mnt/elkstack/certs for the ELK Stack SSL/TLS certificates;
- /mnt/elkstack/configs/logstash for shared configuration files;
- /mnt/elkstack/data/ for the Elastic Stack components' data:
  - elasticsearch/{01,02,03}
  - kibana/{01,02,03}
  - logstash/{01,02,03}
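The directory layout above can be created in one go on the NFS server. Here is a minimal sketch, assuming you pass the share root as an argument; create_elk_layout is a hypothetical helper, and it uses plain loops instead of brace expansion so that it also works in shells other than bash.

```shell
#!/bin/sh
# Hypothetical helper: create the shared directory layout described above
# under BASE ($1), e.g. /mnt/elkstack on the NFS server.
create_elk_layout() {
  base=$1
  mkdir -p "$base/certs" "$base/configs/logstash" || return 1
  # One data directory per component and per node (01, 02, 03).
  for component in elasticsearch kibana logstash; do
    for n in 01 02 03; do
      mkdir -p "$base/data/$component/$n" || return 1
    done
  done
}
```

For example, run `create_elk_layout /mnt/elkstack` on the NFS server. Elastic's container images run their processes as a non-root user with UID 1000, so depending on your NFS setup you may also need to give that user write access to the data directories (for example, `chown -R 1000:0 /mnt/elkstack/data`); treat this as an assumption to verify in your environment.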

In the Logstash configuration directory, we use the same pipeline configuration as in our previous guide. However, we adjusted the output to send events to all three Elasticsearch nodes;

output {
  elasticsearch {
    hosts => ["https://${NODE1_NAME}:9200","https://${NODE2_NAME}:9200", "https://${NODE3_NAME}:9200"]
    user => "${ELASTICSEARCH_USERNAME}"
    password => "${ELASTICSEARCH_PASSWORD}"
    ssl => true
    cacert => "config/certs/ca/ca.crt"
  }
}

The ${NODE1_NAME}, ${NODE2_NAME} and ${NODE3_NAME} references are resolved from the Logstash container's environment, so those variables must be passed to the Logstash service in each Compose file.

Create Docker-Compose file for your Container Services

We will be using three nodes in this setup. On each node, we will run one container for each ELK Stack component: Elasticsearch 8, Logstash 8, and Kibana 8. We will then connect the Elasticsearch 8 containers together to form a cluster.

Thus, on the first node, let's create a Docker Compose file for our ELK stack.

mkdir elkstack-docker
cd elkstack-docker
vim docker-compose.yml

Below is a sample Docker Compose configuration for the first node of our ELK Stack 8 cluster.

version: '3.8'
services:
  es_setup:
    image: docker.elastic.co/elasticsearch/elasticsearch:${STACK_VERSION}
    volumes:
      - certs:/usr/share/elasticsearch/config/certs
    user: "0"
    command: >
      bash -c '
        echo "Creating ES certs directory..."
        [[ -d config/certs ]] || mkdir config/certs
        # Check if CA certificate exists
        if [ ! -f config/certs/ca/ca.crt ]; then
          echo "Generating Wildcard SSL certs for ES (in PEM format)..."
          bin/elasticsearch-certutil ca --pem --days 3650 --out config/certs/elkstack-ca.zip
          unzip -d config/certs config/certs/elkstack-ca.zip
          bin/elasticsearch-certutil cert \
            --name elkstack-certs \
            --ca-cert config/certs/ca/ca.crt \
            --ca-key config/certs/ca/ca.key \
            --pem \
            --dns "*.${DOMAIN_SUFFIX},localhost,${NODE1_NAME},${NODE2_NAME},${NODE3_NAME}" \
            --ip 192.168.122.60 \
            --ip 192.168.122.123 \
            --ip 192.168.122.152 \
            --days ${DAYS} \
            --out config/certs/elkstack-certs.zip
          unzip -d config/certs config/certs/elkstack-certs.zip
        else
          echo "CA certificate already exists. Skipping Certificates generation."
        fi
        # Check if Elasticsearch is ready
        until curl -s --cacert config/certs/ca/ca.crt -u "elastic:${ELASTIC_PASSWORD}" https://${NODE1_NAME}:9200 | grep -q "${CLUSTER_NAME}"; do sleep 10; done
        # Set kibana_system password
        if curl -sk -XGET --cacert config/certs/ca/ca.crt "https://${NODE1_NAME}:9200" -u "kibana_system:${KIBANA_PASSWORD}" | grep -q "${CLUSTER_NAME}"; then
          echo "Password for kibana_system is working. Proceeding with Elasticsearch setup for kibana_system."
        else
          echo "Failed to authenticate with kibana_system password. Trying to set the password for kibana_system."
          until curl -s -XPOST --cacert config/certs/ca/ca.crt -u "elastic:${ELASTIC_PASSWORD}" -H "Content-Type: application/json" https://${NODE1_NAME}:9200/_security/user/kibana_system/_password -d "{\"password\":\"${KIBANA_PASSWORD}\"}" | grep -q "^{}"; do sleep 10; done
        fi
        echo "Setup is done!"
      '
    networks:
      - elastic
    healthcheck:
      test: ["CMD-SHELL", "[ -f config/certs/elkstack-certs/elkstack-certs.crt ]"]
      interval: 1s
      timeout: 5s
      retries: 120
  elasticsearch:
    depends_on:
      es_setup:
        condition: service_healthy
    container_name: ${NODE1_NAME}
    image: docker.elastic.co/elasticsearch/elasticsearch:${STACK_VERSION}
    environment:
      - node.name=${NODE1_NAME}
      - network.publish_host=${NODE1}
      - cluster.name=${CLUSTER_NAME}
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms1g -Xmx1g"
      - ELASTIC_PASSWORD=${ELASTIC_PASSWORD}
      - xpack.security.enabled=true
      - xpack.security.http.ssl.enabled=true
      - xpack.security.transport.ssl.enabled=true
      - xpack.security.enrollment.enabled=false
      - xpack.security.autoconfiguration.enabled=false
      - xpack.security.http.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.http.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.http.ssl.certificate_authorities=certs/ca/ca.crt
      - xpack.security.transport.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.transport.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.transport.ssl.certificate_authorities=certs/ca/ca.crt
      - cluster.initial_master_nodes=${NODE1_NAME},${NODE2_NAME},${NODE3_NAME}
      - discovery.seed_hosts=192.168.122.60,192.168.122.123,192.168.122.152
      - KIBANA_USERNAME=${KIBANA_USERNAME}
      - KIBANA_PASSWORD=${KIBANA_PASSWORD}
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - elasticsearch_data:/usr/share/elasticsearch/data
      - certs:/usr/share/elasticsearch/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - 9200:9200
      - 9300:9300
    networks:
      - elastic
    healthcheck:
      test: ["CMD-SHELL", "curl --fail -k -s -u elastic:${ELASTIC_PASSWORD} --cacert config/certs/ca/ca.crt https://${NODE1_NAME}:9200"]
      interval: 30s
      timeout: 10s
      retries: 5
    restart: unless-stopped
  kibana:
    image: docker.elastic.co/kibana/kibana:${STACK_VERSION}
    container_name: kibana
    environment:
      - SERVER_NAME=${KIBANA_SERVER_HOST}
      - ELASTICSEARCH_HOSTS=https://${NODE1_NAME}:9200
      - ELASTICSEARCH_SSL_CERTIFICATEAUTHORITIES=config/certs/ca/ca.crt
      - ELASTICSEARCH_USERNAME=${KIBANA_USERNAME}
      - ELASTICSEARCH_PASSWORD=${KIBANA_PASSWORD}
      - XPACK_REPORTING_ROLES_ENABLED=false
      - XPACK_REPORTING_KIBANASERVER_HOSTNAME=localhost
      - XPACK_ENCRYPTEDSAVEDOBJECTS_ENCRYPTIONKEY=${SAVEDOBJECTS_ENCRYPTIONKEY}
      - XPACK_SECURITY_ENCRYPTIONKEY=${REPORTING_ENCRYPTIONKEY}
      - XPACK_REPORTING_ENCRYPTIONKEY=${SECURITY_ENCRYPTIONKEY}
    volumes:
      - kibana_data:/usr/share/kibana/data
      - certs:/usr/share/kibana/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - ${KIBANA_PORT}:5601
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
  logstash:
    image: docker.elastic.co/logstash/logstash:${STACK_VERSION}
    container_name: logstash
    environment:
      - XPACK_MONITORING_ENABLED=false
      - ELASTICSEARCH_USERNAME=${ES_USER}
      - ELASTICSEARCH_PASSWORD=${ELASTIC_PASSWORD}
      - NODE_NAME=${NODE1_NAME}
      - NODE1_NAME=${NODE1_NAME}
      - NODE2_NAME=${NODE2_NAME}
      - NODE3_NAME=${NODE3_NAME}
    ports:
      - 5044:5044
    volumes:
      - logstash_filters:/usr/share/logstash/pipeline/:ro
      - certs:/usr/share/logstash/config/certs
      - logstash_data:/usr/share/logstash/data
      - /etc/hosts:/etc/hosts
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
volumes:
  certs:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/certs"
  elasticsearch_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/elasticsearch/01"
  kibana_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/kibana/01"
  logstash_filters:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/configs/logstash"
  logstash_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/logstash/01"
networks:
  elastic:

Where:

- es_setup service:
  - Image: Uses the official Elasticsearch Docker image with the specified version (${STACK_VERSION}).
  - Volumes: Mounts the certs volume at config/certs under the Elasticsearch configuration directory.
  - User: Runs as root (user ID 0).
  - Command: Runs a shell script that generates the Elasticsearch certificates, waits for Elasticsearch to respond, and sets the kibana_system password.
  - Networks: Connects to the elastic network.
  - Healthcheck: Reports healthy once config/certs/elkstack-certs/elkstack-certs.crt exists.
- elasticsearch service:
  - Depends On: Starts only after the es_setup service is healthy.
  - Container Name: Uses a custom name for the Elasticsearch container (${NODE1_NAME}).
  - Image: Uses the official Elasticsearch Docker image with the specified version (${STACK_VERSION}).
  - Environment: Configures Elasticsearch settings such as the node name, network and discovery settings, and security.
  - Ulimits: Sets the memory lock limits for Elasticsearch.
  - Volumes: Mounts volumes for Elasticsearch data, certificates, and the hosts file.
  - Ports: Exposes ports 9200 (HTTP) and 9300 (transport).
  - Networks: Connects to the elastic network.
  - Healthcheck: Checks Elasticsearch availability with an authenticated curl request.
- kibana service:
  - Image: Uses the official Kibana Docker image with the specified version (${STACK_VERSION}).
  - Container Name: Uses a custom name for the Kibana container (kibana).
  - Environment: Configures Kibana, including the Elasticsearch connection details and security settings.
  - Volumes: Mounts volumes for Kibana data, certificates, and the hosts file.
  - Ports: Exposes the Kibana port (${KIBANA_PORT}, mapped to 5601 in the container).
  - Networks: Connects to the elastic network.
  - Depends On: Starts only after the elasticsearch service is healthy.
- logstash service:
  - Image: Uses the official Logstash Docker image with the specified version (${STACK_VERSION}).
  - Container Name: Uses a custom name for the Logstash container (logstash).
  - Environment: Configures Logstash, including the Elasticsearch connection details and security settings.
  - Ports: Exposes port 5044 for Logstash.
  - Volumes: Mounts volumes for the Logstash pipeline (filters), certificates, Logstash data, and the hosts file.
  - Networks: Connects to the elastic network.
  - Depends On: Starts only after the elasticsearch service is healthy.
- Volumes: Defines the volumes for certificates, Elasticsearch data, Kibana data, Logstash filters, and Logstash data. All of them are backed by the NFS share.
- Networks: Defines a custom network named elastic that connects the services.

All the environment variables used are defined in the .env file.

cat .env

# Version of Elastic products

STACK_VERSION=8.12.0

# Set the cluster name

CLUSTER_NAME=elk-docker-cluster

# Set Elasticsearch Node Name

NODE1_NAME=es01

NODE2_NAME=es02

NODE3_NAME=es03

# Docker Host IP to advertise to cluster nodes

NODE1=192.168.122.60

NODE2=192.168.122.123

NODE3=192.168.122.152

# Elasticsearch super user

ES_USER=elastic

# Password for the 'elastic' user (at least 6 characters). No special characters, ! or @ or $.

ELASTIC_PASSWORD=ChangeME

# Elasticsearch container name

ES_NAME=elasticsearch

# Port to expose Elasticsearch HTTP API to the host

ES_PORT=9200

#ES_PORT=127.0.0.1:9200

# Port to expose Kibana to the host

KIBANA_PORT=5601

KIBANA_SERVER_HOST=localhost

# Kibana encryption keys. Each requires at least 32 characters and can be generated using `openssl rand -hex 16`

SAVEDOBJECTS_ENCRYPTIONKEY=ca11560aec8410ff002d011c2a172608

REPORTING_ENCRYPTIONKEY=288f06b3a14a7f36dd21563d50ec76d4

SECURITY_ENCRYPTIONKEY=62c781d3a2b2eaee1d4cebcc6bf42b48

# Kibana - Elasticsearch Authentication Credentials for user kibana_system

# Password for the 'kibana_system' user (at least 6 characters). No special characters, ! or @ or $.

KIBANA_USERNAME=kibana_system

KIBANA_PASSWORD=ChangeME

# Domain Suffix for ES Wildcard SSL certs

DOMAIN_SUFFIX=kifarunix-demo.com

# Generated Certs Validity Period

DAYS=3650

# Logstash Input Port

LS_PORT=5044
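Before starting the containers, it can help to check the .env file against the rules stated in its own comments, and to replace the sample encryption keys (which are published in this guide) with freshly generated ones. Here is a minimal sketch; gen_key, env_value, and validate_env are hypothetical helpers, and gen_key reads /dev/urandom through od as an alternative to openssl rand -hex 16 on hosts without OpenSSL.

```shell
#!/bin/sh
# Hypothetical helpers for checking the .env file used in this guide.

# Print a 32-character hex string (16 random bytes), the same length
# that `openssl rand -hex 16` produces.
gen_key() {
  od -An -N16 -tx1 /dev/urandom | tr -d ' \n'
}

# Print the value assigned to KEY ($2) in env file $1 (the last assignment wins).
env_value() {
  sed -n "s/^$2=//p" "$1" | tail -n 1
}

# Check the rules stated in the .env comments: passwords of at least 6 characters
# without !, @ or $, and encryption keys of at least 32 characters.
# Prints one line per problem and returns non-zero if any were found.
validate_env() {
  file=$1
  status=0
  for var in ELASTIC_PASSWORD KIBANA_PASSWORD; do
    val=$(env_value "$file" "$var")
    if [ "${#val}" -lt 6 ]; then
      echo "$var: shorter than 6 characters"
      status=1
    fi
    case $val in
      *[@!\$]*) echo "$var: contains !, @ or \$"; status=1 ;;
    esac
  done
  for var in SAVEDOBJECTS_ENCRYPTIONKEY REPORTING_ENCRYPTIONKEY SECURITY_ENCRYPTIONKEY; do
    val=$(env_value "$file" "$var")
    if [ "${#val}" -lt 32 ]; then
      echo "$var: shorter than 32 characters"
      status=1
    fi
  done
  return "$status"
}
```

For example, `validate_env .env` prints nothing and succeeds for the sample file above. To replace the sample keys, run gen_key three times and put the results in the .env file on every node, since all nodes share the same values.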

Similarly, on the other nodes, create the Docker Compose file and the respective environment variables file. We will use the same environment variables across all nodes!

On the second node;

cat docker-compose.yml

services:
  elasticsearch:
    container_name: ${NODE2_NAME}
    image: docker.elastic.co/elasticsearch/elasticsearch:${STACK_VERSION}
    command: >
      bash -c '
        until [ -f "config/certs/elkstack-certs/elkstack-certs.crt" ]; do
          sleep 10;
        done;
        exec /usr/local/bin/docker-entrypoint.sh
      '
    environment:
      - node.name=${NODE2_NAME}
      - network.publish_host=${NODE2}
      - cluster.name=${CLUSTER_NAME}
      - bootstrap.memory_lock=true
      - cluster.initial_master_nodes=${NODE1_NAME},${NODE2_NAME},${NODE3_NAME}
      - discovery.seed_hosts=${NODE1},${NODE2},${NODE3}
      - "ES_JAVA_OPTS=-Xms1g -Xmx1g"
      - ELASTIC_PASSWORD=${ELASTIC_PASSWORD}
      - xpack.security.enabled=true
      - xpack.security.http.ssl.enabled=true
      - xpack.security.enrollment.enabled=false
      - xpack.security.autoconfiguration.enabled=false
      - xpack.security.transport.ssl.enabled=true
      - xpack.security.http.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.http.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.http.ssl.certificate_authorities=certs/ca/ca.crt
      - xpack.security.transport.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.transport.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.transport.ssl.certificate_authorities=certs/ca/ca.crt
      - KIBANA_USERNAME=${KIBANA_USERNAME}
      - KIBANA_PASSWORD=${KIBANA_PASSWORD}
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - elasticsearch_data:/usr/share/elasticsearch/data
      - certs:/usr/share/elasticsearch/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - 9200:9200
      - 9300:9300
    networks:
      - elastic
    healthcheck:
      test: ["CMD-SHELL", "curl --fail -k -s -u elastic:${ELASTIC_PASSWORD} --cacert config/certs/ca/ca.crt https://${NODE2_NAME}:9200"]
      interval: 30s
      timeout: 10s
      retries: 5
    restart: unless-stopped
  kibana:
    image: docker.elastic.co/kibana/kibana:${STACK_VERSION}
    container_name: kibana02
    environment:
      - SERVER_NAME=${KIBANA_SERVER_HOST}
      - ELASTICSEARCH_HOSTS=https://${NODE2_NAME}:9200
      - ELASTICSEARCH_SSL_CERTIFICATEAUTHORITIES=config/certs/ca/ca.crt
      - ELASTICSEARCH_USERNAME=${KIBANA_USERNAME}
      - ELASTICSEARCH_PASSWORD=${KIBANA_PASSWORD}
      - XPACK_REPORTING_ROLES_ENABLED=false
      - XPACK_REPORTING_KIBANASERVER_HOSTNAME=localhost
      - XPACK_ENCRYPTEDSAVEDOBJECTS_ENCRYPTIONKEY=${SAVEDOBJECTS_ENCRYPTIONKEY}
      - XPACK_SECURITY_ENCRYPTIONKEY=${REPORTING_ENCRYPTIONKEY}
      - XPACK_REPORTING_ENCRYPTIONKEY=${SECURITY_ENCRYPTIONKEY}
    volumes:
      - kibana_data:/usr/share/kibana/data
      - certs:/usr/share/kibana/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - ${KIBANA_PORT}:5601
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
  logstash:
    image: docker.elastic.co/logstash/logstash:${STACK_VERSION}
    container_name: logstash02
    environment:
      - XPACK_MONITORING_ENABLED=false
      - ELASTICSEARCH_USERNAME=${ES_USER}
      - ELASTICSEARCH_PASSWORD=${ELASTIC_PASSWORD}
      - NODE_NAME=${NODE2_NAME}
      - NODE1_NAME=${NODE1_NAME}
      - NODE2_NAME=${NODE2_NAME}
      - NODE3_NAME=${NODE3_NAME}
    ports:
      - 5044:5044
    volumes:
      - certs:/usr/share/logstash/config/certs
      - logstash_filters:/usr/share/logstash/pipeline/:ro
      - logstash_data:/usr/share/logstash/data
      - /etc/hosts:/etc/hosts
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
volumes:
  certs:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,ro"
      device: ":/mnt/elkstack/certs"
  elasticsearch_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/elasticsearch/02"
  kibana_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/kibana/02"
  logstash_filters:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,ro"
      device: ":/mnt/elkstack/configs/logstash"
  logstash_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/logstash/02"
networks:
  elastic:

cat .env

# Version of Elastic products

STACK_VERSION=8.12.0

# Set the cluster name

CLUSTER_NAME=elk-docker-cluster

# Set Elasticsearch Node Name

NODE1_NAME=es01

NODE2_NAME=es02

NODE3_NAME=es03

# Docker Host IP to advertise to cluster nodes

NODE1=192.168.122.60

NODE2=192.168.122.123

NODE3=192.168.122.152

# Elasticsearch super user

ES_USER=elastic

# Password for the 'elastic' user (at least 6 characters). No special characters, ! or @ or $.

ELASTIC_PASSWORD=ChangeME

# Elasticsearch container name

ES_NAME=elasticsearch

# Port to expose Elasticsearch HTTP API to the host

ES_PORT=9200

#ES_PORT=127.0.0.1:9200

# Port to expose Kibana to the host

KIBANA_PORT=5601

KIBANA_SERVER_HOST=localhost

# Kibana encryption keys. Each requires at least 32 characters and can be generated using `openssl rand -hex 16`

SAVEDOBJECTS_ENCRYPTIONKEY=ca11560aec8410ff002d011c2a172608

REPORTING_ENCRYPTIONKEY=288f06b3a14a7f36dd21563d50ec76d4

SECURITY_ENCRYPTIONKEY=62c781d3a2b2eaee1d4cebcc6bf42b48

# Kibana - Elasticsearch Authentication Credentials for user kibana_system

# Password for the 'kibana_system' user (at least 6 characters). No special characters, ! or @ or $.

KIBANA_USERNAME=kibana_system

KIBANA_PASSWORD=ChangeME

# Domain Suffix for ES Wildcard SSL certs

DOMAIN_SUFFIX=kifarunix-demo.com

# Generated Certs Validity Period

DAYS=3650

# Logstash Input Port

LS_PORT=5044

On the third node;

cat docker-compose.yml

services:
  elasticsearch:
    container_name: ${NODE3_NAME}
    image: docker.elastic.co/elasticsearch/elasticsearch:${STACK_VERSION}
    command: >
      bash -c '
        until [ -f "config/certs/elkstack-certs/elkstack-certs.crt" ]; do
          sleep 10;
        done;
        exec /usr/local/bin/docker-entrypoint.sh
      '
    environment:
      - node.name=${NODE3_NAME}
      - network.publish_host=${NODE3}
      - cluster.name=${CLUSTER_NAME}
      - bootstrap.memory_lock=true
      - cluster.initial_master_nodes=${NODE1_NAME},${NODE2_NAME},${NODE3_NAME}
      - discovery.seed_hosts=${NODE1},${NODE2},${NODE3}
      - "ES_JAVA_OPTS=-Xms1g -Xmx1g"
      - ELASTIC_PASSWORD=${ELASTIC_PASSWORD}
      - xpack.security.enabled=true
      - xpack.security.http.ssl.enabled=true
      - xpack.security.transport.ssl.enabled=true
      - xpack.security.enrollment.enabled=false
      - xpack.security.autoconfiguration.enabled=false
      - xpack.security.http.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.http.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.http.ssl.certificate_authorities=certs/ca/ca.crt
      - xpack.security.transport.ssl.key=certs/elkstack-certs/elkstack-certs.key
      - xpack.security.transport.ssl.certificate=certs/elkstack-certs/elkstack-certs.crt
      - xpack.security.transport.ssl.certificate_authorities=certs/ca/ca.crt
      - KIBANA_USERNAME=${KIBANA_USERNAME}
      - KIBANA_PASSWORD=${KIBANA_PASSWORD}
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - elasticsearch_data:/usr/share/elasticsearch/data
      - certs:/usr/share/elasticsearch/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - 9200:9200
      - 9300:9300
    networks:
      - elastic
    healthcheck:
      test: ["CMD-SHELL", "curl --fail -k -s -u elastic:${ELASTIC_PASSWORD} --cacert config/certs/ca/ca.crt https://${NODE3_NAME}:9200"]
      interval: 30s
      timeout: 10s
      retries: 5
    restart: unless-stopped
  kibana:
    image: docker.elastic.co/kibana/kibana:${STACK_VERSION}
    container_name: kibana
    environment:
      - SERVER_NAME=${KIBANA_SERVER_HOST}
      - ELASTICSEARCH_HOSTS=https://${NODE3_NAME}:9200
      - ELASTICSEARCH_SSL_CERTIFICATEAUTHORITIES=config/certs/ca/ca.crt
      - ELASTICSEARCH_USERNAME=${KIBANA_USERNAME}
      - ELASTICSEARCH_PASSWORD=${KIBANA_PASSWORD}
      - XPACK_REPORTING_ROLES_ENABLED=false
      - XPACK_REPORTING_KIBANASERVER_HOSTNAME=localhost
      - XPACK_ENCRYPTEDSAVEDOBJECTS_ENCRYPTIONKEY=${SAVEDOBJECTS_ENCRYPTIONKEY}
      - XPACK_SECURITY_ENCRYPTIONKEY=${REPORTING_ENCRYPTIONKEY}
      - XPACK_REPORTING_ENCRYPTIONKEY=${SECURITY_ENCRYPTIONKEY}
    volumes:
      - kibana_data:/usr/share/kibana/data
      - certs:/usr/share/kibana/config/certs
      - /etc/hosts:/etc/hosts
    ports:
      - ${KIBANA_PORT}:5601
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
  logstash:
    image: docker.elastic.co/logstash/logstash:${STACK_VERSION}
    container_name: logstash
    environment:
      - XPACK_MONITORING_ENABLED=false
      - ELASTICSEARCH_USERNAME=${ES_USER}
      - ELASTICSEARCH_PASSWORD=${ELASTIC_PASSWORD}
      - NODE_NAME=${NODE3_NAME}
      - NODE1_NAME=${NODE1_NAME}
      - NODE2_NAME=${NODE2_NAME}
      - NODE3_NAME=${NODE3_NAME}
    ports:
      - 5044:5044
    volumes:
      - certs:/usr/share/logstash/config/certs
      - logstash_filters:/usr/share/logstash/pipeline/:ro
      - logstash_data:/usr/share/logstash/data
      - /etc/hosts:/etc/hosts
    networks:
      - elastic
    depends_on:
      elasticsearch:
        condition: service_healthy
    restart: unless-stopped
volumes:
  certs:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,ro"
      device: ":/mnt/elkstack/certs"
  elasticsearch_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/elasticsearch/03"
  kibana_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/kibana/03"
  logstash_filters:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,ro"
      device: ":/mnt/elkstack/configs/logstash"
  logstash_data:
    driver: local
    driver_opts:
      type: nfs
      o: "addr=192.168.122.60,nfsvers=4,rw"
      device: ":/mnt/elkstack/data/logstash/03"
networks:
  elastic:

cat .env

# Version of Elastic products

STACK_VERSION=8.12.0

# Set the cluster name

CLUSTER_NAME=elk-docker-cluster

# Set Elasticsearch Node Name

NODE1_NAME=es01

NODE2_NAME=es02

NODE3_NAME=es03

# Docker Host IP to advertise to cluster nodes

NODE1=192.168.122.60

NODE2=192.168.122.123

NODE3=192.168.122.152

# Elasticsearch super user

ES_USER=elastic

# Password for the 'elastic' user (at least 6 characters). No special characters, ! or @ or $.

ELASTIC_PASSWORD=ChangeME

# Elasticsearch container name

ES_NAME=elasticsearch

# Port to expose Elasticsearch HTTP API to the host

ES_PORT=9200

#ES_PORT=127.0.0.1:9200

# Port to expose Kibana to the host

KIBANA_PORT=5601

KIBANA_SERVER_HOST=localhost

# Kibana encryption keys. Each requires at least 32 characters and can be generated using `openssl rand -hex 16`

SAVEDOBJECTS_ENCRYPTIONKEY=ca11560aec8410ff002d011c2a172608

REPORTING_ENCRYPTIONKEY=288f06b3a14a7f36dd21563d50ec76d4

SECURITY_ENCRYPTIONKEY=62c781d3a2b2eaee1d4cebcc6bf42b48

# Kibana - Elasticsearch Authentication Credentials for user kibana_system

# Password for the 'kibana_system' user (at least 6 characters). No special characters, ! or @ or $.

KIBANA_USERNAME=kibana_system

KIBANA_PASSWORD=ChangeME

# Domain Suffix for ES Wildcard SSL certs

DOMAIN_SUFFIX=kifarunix-demo.com

# Generated Certs Validity Period

DAYS=3650

# Logstash Input Port

LS_PORT=5044

Validate Docker Compose Files

It is important to check the syntax, environment variables, and other settings in your Docker compose file. This can be done using the command;

docker-compose config

Or;

docker compose config

The command will:

- Validate the syntax of the Docker Compose file.
- Parse the file and merge the configuration from all relevant sources (e.g., environment variables, variable interpolation).
- Output the final, resolved configuration.
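Note that docker compose config only warns about an unset variable and then substitutes a blank string. As a complementary check, a small helper can list every ${VAR} referenced in the Compose file that the .env file does not define. Here is a minimal sketch; missing_env_vars is a hypothetical name, it only recognizes the ${VAR} form used in this guide, and grep -o is a GNU/BSD extension rather than POSIX.

```shell
#!/bin/sh
# Hypothetical helper: print each ${VAR} referenced in the Compose file ($1)
# that has no VAR=... assignment in the env file ($2).
missing_env_vars() {
  compose_file=$1
  env_file=$2
  grep -oE '\$\{[A-Za-z_][A-Za-z0-9_]*\}' "$compose_file" |
    sed 's/^\${//; s/}$//' |   # strip the ${ and } around the name
    sort -u |
    while read -r var; do
      grep -q "^${var}=" "$env_file" || printf '%s\n' "$var"
    done
}
```

Run it from the elkstack-docker directory on each node: `missing_env_vars docker-compose.yml .env` prints nothing when every referenced variable is defined.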

Start ELK Stack 8 Docker Containers

Once you have everything in place, then it is time to start the containers.

Note that in our setup, the containers on the first node must be started first. This is because the shared SSL/TLS certificates required for the cluster are generated on this node.

Thus, having confirmed all is set to go, fire up!

docker-compose up -d

[+] Running 37/26

✔ kibana 12 layers [⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿] 0B/0B Pulled 31.9s

✔ es_setup Pulled 25.4s

✔ elasticsearch 10 layers [⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿] 0B/0B Pulled 25.4s

✔ logstash 11 layers [⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿⣿] 0B/0B Pulled 24.8s

...

[+] Running 10/10

✔ Network elkstack-docker_elastic Created 0.1s

✔ Volume "elkstack-docker_elasticsearch_data" Created 0.0s

✔ Volume "elkstack-docker_kibana_data" Created 0.0s

✔ Volume "elkstack-docker_certs" Created 0.0s

✔ Volume "elkstack-docker_logstash_filters" Created 0.0s

✔ Volume "elkstack-docker_logstash_data" Created 0.0s

✔ Container elkstack-docker-es_setup-1 Healthy 0.6s

✔ Container es01 Healthy 0.2s

✔ Container kibana Started 0.2s

✔ Container logstash Started

As soon as the containers on the first node are running, log in to the other two nodes and launch the containers;

Node2/Node3;

docker-compose up -d

[+] Running 9/9

✔ Network elkstack-docker_elastic Created 0.1s

✔ Volume "elkstack-docker_certs" Created 0.0s

✔ Volume "elkstack-docker_kibana_data" Created 0.0s

✔ Volume "elkstack-docker_logstash_filters" Created 0.0s

✔ Volume "elkstack-docker_logstash_data" Created 0.0s

✔ Volume "elkstack-docker_elasticsearch_data" Created 0.0s

✔ Container es02 Healthy 0.3s

✔ Container kibana02 Started 0.1s

✔ Container logstash02 Started

If everything goes well, then your three node ELK Stack 8 cluster on Docker containers should be up now!
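The nodes may take a minute or two to find each other, and the checks that follow can fail while they do. Rather than re-running them by hand, or relying on open-ended until loops like the ones in the Compose files, a bounded retry helper can wait for them. Here is a minimal sketch; retry is a hypothetical helper, not a Docker or Elastic command.

```shell
#!/bin/sh
# Hypothetical helper: run a command up to ATTEMPTS times ($1), sleeping
# DELAY seconds ($2) between failed attempts. Returns 0 on the first success,
# or the exit status of the last attempt if every attempt fails.
retry() {
  attempts=$1
  delay=$2
  shift 2
  n=1
  while :; do
    "$@" && return 0
    rc=$?
    [ "$n" -ge "$attempts" ] && return "$rc"
    n=$((n + 1))
    sleep "$delay"
  done
}
```

For example, with ELASTIC_PASSWORD exported in your shell, `retry 30 10 docker exec es01 curl -fs -o /dev/null --cacert config/certs/ca/ca.crt -u "elastic:$ELASTIC_PASSWORD" https://es01:9200` keeps trying for roughly five minutes (30 attempts, 10 seconds apart) until es01 answers an authenticated request; curl's -f flag makes an HTTP error such as 401 count as a failure.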

Check the containers running on the first node;

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

22fc737061c6 docker.elastic.co/logstash/logstash:8.12.0 "/usr/local/bin/dock…" 4 minutes ago Up 3 minutes 0.0.0.0:5044->5044/tcp, :::5044->5044/tcp, 9600/tcp logstash

e71f04eefbb4 docker.elastic.co/kibana/kibana:8.12.0 "/bin/tini -- /usr/l…" 4 minutes ago Up 3 minutes 0.0.0.0:5601->5601/tcp, :::5601->5601/tcp kibana

8e623c5e277e docker.elastic.co/elasticsearch/elasticsearch:8.12.0 "/bin/tini -- /usr/l…" 4 minutes ago Up 4 minutes (healthy) 0.0.0.0:9200->9200/tcp, :::9200->9200/tcp, 0.0.0.0:9300->9300/tcp, :::9300->9300/tcp es01

Do the same on the other nodes.

Verify the ELK Stack Cluster

Now, check if the cluster is up and running.

docker exec -it es01 bash -c 'curl -sk -XGET https://es01:9200/_cat/nodes?pretty -u elastic'

Enter the elastic user's password when prompted. Sample output;

192.168.122.152 15 98 6 0.13 0.54 0.34 dm - es03

192.168.122.123 51 98 8 0.58 0.74 0.43 dm * es02

192.168.122.60 28 98 16 1.72 1.88 1.10 dm - es01

Hurray! The cluster is up and running! Note that es02, the node on the second host (marked with *), is currently the elected master.
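To script this check instead of reading the table by eye, the default _cat/nodes columns can be parsed with awk: the ninth column holds * for the elected master and the tenth holds the node name. Here is a minimal sketch; cat_nodes_count and cat_nodes_master are hypothetical helpers that assume the default column layout shown above, without a ?v header row.

```shell
#!/bin/sh
# Hypothetical helpers for the default _cat/nodes text output, whose columns are:
# ip heap.percent ram.percent cpu load_1m load_5m load_15m node.role master name

# Print the number of non-empty lines, i.e. the number of nodes in the cluster.
cat_nodes_count() {
  awk 'NF > 0 { n++ } END { print n + 0 }'
}

# Print the name of the node marked with * in the master column.
cat_nodes_master() {
  awk '$9 == "*" { print $10 }'
}
```

For example, `docker exec es01 curl -s --cacert config/certs/ca/ca.crt -u "elastic:$ELASTIC_PASSWORD" https://es01:9200/_cat/nodes | cat_nodes_count` should print 3 once all nodes have joined, and piping the same output to cat_nodes_master prints the elected master's name (es02 in the sample above).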

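You can also check the container logs under /var/lib/docker/containers/. This directory is normally readable only by root, so run the commands through a root shell (otherwise the wildcards are not expanded);

sudo sh -c 'tail -f /var/lib/docker/containers/*/*.log'

Replace the first asterisk with a container ID to follow only that container's logs. Alternatively, docker logs -f es01 follows the logs of a single container by name.

You can also grep the logs for keywords such as added, master node, or elected-as;

sudo sh -c "grep -irE --color 'added|master node|elected-as' /var/lib/docker/containers/*/*.log"

Check the ports exposed by the services on the host;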

ss -atlnp

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 4096 0.0.0.0:5601 0.0.0.0:* users:(("docker-proxy",pid=2945394,fd=4))

LISTEN 0 4096 0.0.0.0:9300 0.0.0.0:* users:(("docker-proxy",pid=2944085,fd=4))

LISTEN 0 128 0.0.0.0:22 0.0.0.0:* users:(("sshd",pid=710,fd=3))

LISTEN 0 4096 0.0.0.0:5044 0.0.0.0:* users:(("docker-proxy",pid=2945415,fd=4))

LISTEN 0 4096 0.0.0.0:9200 0.0.0.0:* users:(("docker-proxy",pid=2944108,fd=4))

LISTEN 0 4096 [::]:5601 [::]:* users:(("docker-proxy",pid=2945401,fd=4))

LISTEN 0 4096 [::]:9300 [::]:* users:(("docker-proxy",pid=2944092,fd=4))

LISTEN 0 128 [::]:22 [::]:* users:(("sshd",pid=710,fd=4))

LISTEN 0 4096 [::]:5044 [::]:* users:(("docker-proxy",pid=2945421,fd=4))

LISTEN 0 4096 [::]:9200 [::]:* users:(("docker-proxy",pid=2944115,fd=4))
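To check the expected ports in one step on each host, the ss output can be filtered for the ports this setup publishes (9200, 9300, 5601, and 5044). Here is a minimal sketch; missing_ports is a hypothetical helper that takes the local address from the fourth column of ss -tln output and prints any expected port with no listening socket.

```shell
#!/bin/sh
# Hypothetical helper: read `ss -tln` (or `ss -atlnp`) output on stdin and print
# each expected port (given as arguments) that has no listening socket.
missing_ports() {
  # Skip the header row; keep only the port part of the local address
  # (works for both 0.0.0.0:9200 and [::]:9200).
  listening=$(awk 'NR > 1 { sub(/.*:/, "", $4); print $4 }' | sort -u)
  for port in "$@"; do
    printf '%s\n' "$listening" | grep -qx "$port" || echo "$port"
  done
}
```

For example, `ss -tln | missing_ports 9200 9300 5601 5044` prints nothing when all four services are listening on the host.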

Accessing Kibana

Note that in our setup, we run the complete ELK stack on all three nodes, but only Elasticsearch is configured as a cluster. Hence, you can access Kibana on any node's IP address and still see the same data.

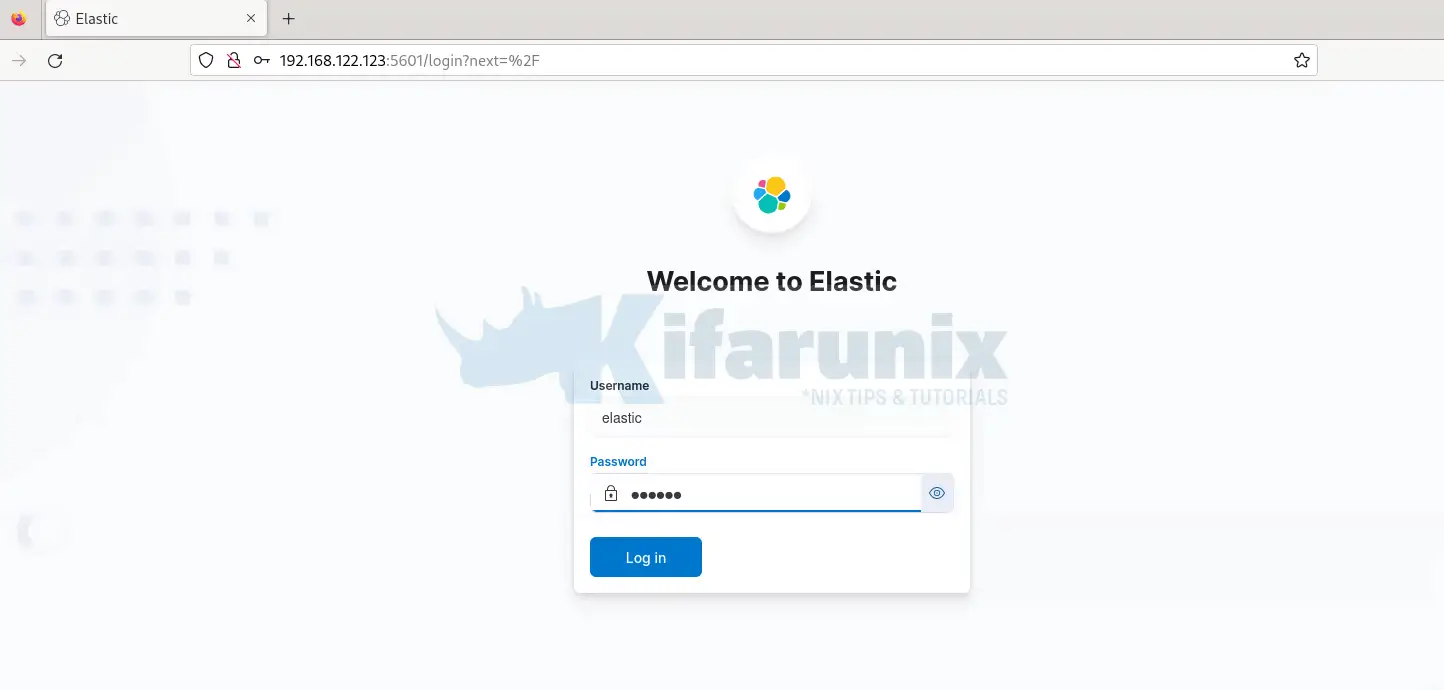

Let’s check Kibana on the first node, http://192.168.122.60:5601.

We are using the superuser (elastic) credentials to log in!

Explore ELK stack 8 cluster dashboards!

Sending Data Logs to Elastic Stack

Since we configured Logstash to receive event data from Beats on port 5044/tcp, we will configure Filebeat to forward events to any of the three Logstash instances.

We already covered how to install and configure Filebeat to forward event data in our previous guides;

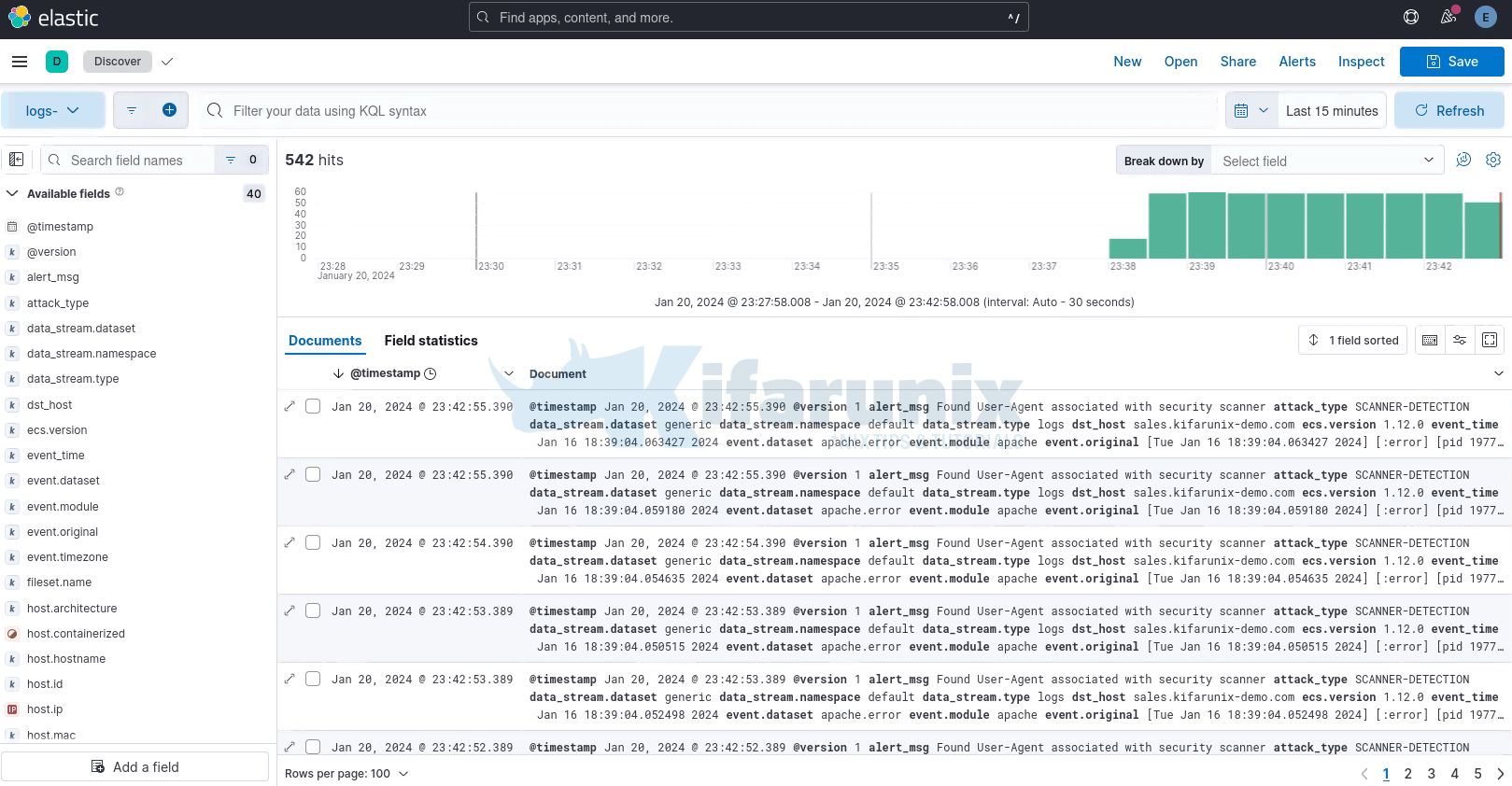

Once you forward data to your Logstash containers, the next thing to do is create a Kibana data view (called an index pattern in earlier Kibana versions).

Open the menu, then go to Stack Management > Kibana > Data Views.

Once done, head to the Discover menu to view your data. You should now be able to see your Logstash custom fields populated.

That marks the end of our tutorial on how to deploy a three-node ELK Stack 8 cluster on Docker containers.

More tutorials;

Deploy ELK Stack 8 on Docker Containers

Configure Kibana Dashboards/Visualizations to use Custom Index