How to run ELK stack on Docker? In this tutorial, we are going to learn how to deploy a single node ELK stack cluster on Docker containers. As of this writing, Elastic 7.17.16 is the latest release in the Elastic 7.x series. ELK stack is a group of open source software projects, Elasticsearch, Logstash, Kibana and Beats, where:

- Elasticsearch is a search and analytics engine

- Logstash is a server‑side data processing pipeline that ingests data from multiple sources simultaneously, transforms it, and then sends it to a “stash” like Elasticsearch

- Kibana lets users visualize data in Elasticsearch with charts and graphs

- Beats are the data shippers. They ship system logs, network, infrastructure data, etc. to either Logstash for further processing or Elasticsearch for indexing.

We will be running Elasticsearch 7.17.16, Logstash 7.17.16, Kibana 7.17.16, as Docker containers in this tutorial.

Kindly note that Elastic 7.x doesn’t enable authentication or TLS/SSL connections by default. If you need them out of the box, you can use ELK stack 8.

Deploy ELK Stack 8 on Docker Containers

Deploying Single Node ELK Stack Cluster on Docker Containers

In this tutorial, therefore, we will learn how to deploy an ELK Stack cluster on Docker using Docker and Docker Compose.

Docker is a platform that enables developers and system administrators to build, run, and share applications with containers. It provides command line interface tools such as docker and docker-compose that are used for managing Docker containers. While docker is a Docker cli for managing single Docker containers, docker-compose on the other hand is used for running and managing multiple Docker containers.

Install Docker Engine

Depending on your host system distribution, you need to install the Docker engine. You can follow the link below to install Docker Engine on Ubuntu/Debian/CentOS 8.

Install and Use Docker on Linux

Checking the installed Docker version;

docker --version

Sample output;

Docker version 24.0.7, build afdd53b

Install Docker Compose

For Docker compose to work, ensure that you have Docker Engine installed. You can follow the links above to install Docker Engine.

Once you have the Docker engine installed, proceed to install Docker compose.

Check the current stable release version of Docker Compose on their Github release page. As of this writing, the Docker Compose version 2.24.0 is the current stable release.

VER=2.24.0

sudo curl -L "https://github.com/docker/compose/releases/download/v$VER/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

This downloads the Docker Compose tool to the /usr/local/bin directory.

Make the Docker Compose binary executable;

sudo chmod +x /usr/local/bin/docker-compose

You should now be able to use Docker Compose (docker-compose) on the CLI.

Check the version of the installed Docker Compose to confirm that it is working as expected.

docker-compose version

Docker Compose version v2.24.0

Running Docker as Non-Root User

We are running both Docker and Docker Compose as a non-root user. To be able to do this, ensure that you add your standard user to the docker group.

For example, I am running this setup as user kifarunix. So, add the user to the docker group. Replace the username accordingly.

sudo usermod -aG docker kifarunix

Log out and log in again as the user that is added to the docker group and you should be able to run the docker and docker-compose CLI tools.
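To confirm that the new group membership is active in your current session, a small check like the one below can help. This is only a sketch; the function name in_group is made up for this example.

```shell
#!/bin/sh
# in_group GROUP: succeed if the current login session belongs to GROUP.
# (in_group is a hypothetical helper name, not a Docker tool.)
in_group() {
    # With no user argument, id -nG lists the groups of the current session.
    for g in $(id -nG); do
        [ "$g" = "$1" ] && return 0
    done
    return 1
}

if in_group docker; then
    echo "this session can use the docker group"
else
    echo "docker group not active yet; log out and log in again"
fi
```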

Important Elasticsearch Settings

Disable Memory Swapping on a Container

There are different ways to disable swapping on Elasticsearch. For an Elasticsearch Docker container, you can simply set the value of the bootstrap.memory_lock option to true. With this option, you also need to ensure that there is no maximum limit imposed on the locked-in-memory address space (LimitMEMLOCK=infinity). We will see how this can be achieved in a Docker compose file.

You can confirm the default value set on the Docker image using the command below;

docker run --rm docker.elastic.co/elasticsearch/elasticsearch:7.17.16 /bin/bash -c 'ulimit -Hm && ulimit -Sm'

Sample output;

unlimited

unlimited

If a value other than unlimited is printed, then you have to set this via the Docker Compose file to ensure that it is set to unlimited for both the soft and hard limits. Note that the -m flag reports the maximum resident set size; to inspect the locked-memory (memlock) limit itself, use ulimit -Hl and ulimit -Sl instead.

For a given service, this is what the configuration looks like in the Docker Compose file.

ulimits:
  memlock:
    soft: -1
    hard: -1
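Once the Elasticsearch container is running, you may want to confirm that memory locking actually took effect. The sketch below checks a response from the nodes API for "mlockall": true. The function name mlockall_enabled is made up for this example, and the usage line assumes that port 9200 is published on localhost.

```shell
#!/bin/sh
# mlockall_enabled: read a _nodes API response on stdin and succeed only
# if it contains "mlockall": true. (Hypothetical helper name.)
mlockall_enabled() {
    grep -Eq '"mlockall"[[:space:]]*:[[:space:]]*true'
}

# Usage against the running container (assumes 9200 is published on localhost):
#   curl -s 'http://localhost:9200/_nodes?filter_path=**.mlockall' | mlockall_enabled \
#       && echo "memory is locked" || echo "memory is NOT locked"
```

On a single node cluster, one match is enough. With several nodes, you would want to inspect each node's value separately.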

Set JVM Heap Size on All Cluster Nodes

Elasticsearch usually sets the heap size automatically based on the node’s roles and the total memory available to the node’s container. This is the recommended approach! For the Elasticsearch Docker containers, any custom JVM settings can be defined using the ES_JAVA_OPTS environment variable, either in the compose file or on the command line. For example, you can set both the minimum and maximum JVM heap size to 512M using ES_JAVA_OPTS=-Xms512m -Xmx512m.
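If you do set the heap manually, a common guideline is to give it no more than half of the memory available to the container. The sketch below derives the flags from a memory limit in bytes; heap_opts is a made-up helper name used only for illustration.

```shell
#!/bin/sh
# heap_opts BYTES: print JVM heap flags sized at half of BYTES, in whole MiB.
# (heap_opts is a hypothetical helper, not part of Elasticsearch.)
heap_opts() {
    half_mib=$(( $1 / 2 / 1024 / 1024 ))
    echo "-Xms${half_mib}m -Xmx${half_mib}m"
}

# 2147483648 bytes is a 2 GiB container memory limit.
heap_opts 2147483648
```

For a 2 GiB limit this prints -Xms1024m -Xmx1024m, which is the same size as a 1g heap.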

Set Maximum Open File Descriptor and Processes on Elasticsearch Containers

Set the maximum number of open files (nofile) for the containers to 65,536 and max number of processes to 4096 (both soft and hard limits).

These limits can also be defined in the compose file. However, the Elasticsearch images usually come with these limits already defined. You can use the command below to check the maximum number of open files (-n) and the maximum number of user processes (-u);

docker run --rm docker.elastic.co/elasticsearch/elasticsearch:7.17.16 /bin/bash -c 'ulimit -Hn && ulimit -Sn && ulimit -Hu && ulimit -Su'

Sample output;

1048576

1048576

unlimited

unlimited

In the Docker Compose file, these limits may look like this. Adjust them accordingly!

ulimits:
  nofile:
    soft: 65536
    hard: 65536
  nproc:
    soft: 4096
    hard: 4096
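To compare the values reported by ulimit against the minimums mentioned above (65,536 open files and 4096 processes), a small helper can treat "unlimited" as always sufficient. This is a sketch; limit_ok is a made-up function name.

```shell
#!/bin/sh
# limit_ok VALUE MINIMUM: succeed if a ulimit VALUE meets MINIMUM.
# ulimit prints either a number or the word "unlimited".
# (limit_ok is a hypothetical helper name.)
limit_ok() {
    [ "$1" = "unlimited" ] && return 0
    [ "$1" -ge "$2" ]
}

# Values taken from the sample output of the image check above.
limit_ok 1048576 65536 && echo "nofile ok"
limit_ok unlimited 4096 && echo "nproc ok"
```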

Update Virtual Memory Settings on All Cluster Nodes

Elasticsearch uses a mmapfs directory by default to store its indices. To ensure that you do not run out of virtual memory, edit the /etc/sysctl.conf on the Docker host and update the value of vm.max_map_count as shown below.

vm.max_map_count=262144

On the Docker host, simply run the command below to configure the virtual memory setting.

echo "vm.max_map_count=262144" | sudo tee -a /etc/sysctl.conf

To apply the changes;

sudo sysctl -p

Deploying ELK Stack Cluster on Docker Using Docker Compose

In this setup, we will deploy a single node Elastic Stack cluster with all the three components, Elasticsearch, Logstash and Kibana containers running on the same host as Docker containers.

If you are running Elastic stack on Docker containers for development or testing purposes, then you can run each component Docker container using docker run commands.

To begin, create a parent directory from which you will build your stack.

mkdir $HOME/elastic-docker

Create Docker Compose file for Deploying Elastic Stack

Docker compose is a tool for defining and running multi-container Docker applications. With Compose, you use a YAML file to configure your application’s services. Then, with a single command, you create and start all the services from your configuration.

Using Compose is basically a three-step process:

- Define your app’s environment with a Dockerfile so it can be reproduced anywhere.

- Define the services that make up your app in docker-compose.yml so they can be run together in an isolated environment.

- Run docker-compose up and Compose starts and runs your entire app.

In this setup, we will build everything using a Docker Compose file.

Setup Docker Compose file for Elastic Stack

Now it is time to create the Docker Compose file for our deployment. Note, we will be using Docker images of the current stable release versions of the Elastic components, v7.17.16.

vim $HOME/elastic-docker/docker-compose.yml

version: '3.8'

services:
  elasticsearch:
    container_name: kifarunix-demo-es
    image: docker.elastic.co/elasticsearch/elasticsearch:7.17.16
    environment:
      - node.name=kifarunix-demo-es
      - cluster.name=es-docker-cluster
      - discovery.type=single-node
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms1g -Xmx1g"
    mem_limit: 2147483648
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - es-data:/usr/share/elasticsearch/data
    ports:
      - 9200:9200
    networks:
      - elastic

  kibana:
    depends_on:
      - elasticsearch
    image: docker.elastic.co/kibana/kibana:7.17.16
    container_name: kifarunix-demo-kibana
    environment:
      ELASTICSEARCH_URL: http://kifarunix-demo-es:9200
      ELASTICSEARCH_HOSTS: http://kifarunix-demo-es:9200
    mem_limit: 1073741824
    ports:
      - 5601:5601
    networks:
      - elastic

  logstash:
    depends_on:
      - elasticsearch
    image: docker.elastic.co/logstash/logstash:7.17.16
    container_name: kifarunix-demo-ls
    ports:
      - "5044:5044"
    mem_limit: 2147483648
    volumes:
      - ./logstash/conf.d/:/usr/share/logstash/pipeline/:ro
    networks:
      - elastic

volumes:
  es-data:
    driver: local

networks:
  elastic:
    driver: bridge

For a complete description of all the Docker compose configuration options, refer to Docker compose reference page.

You can define all the values that are dynamic using environment variables in a .env file. This environment variables file should reside in the same directory as the compose file.
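As a rough illustration of the .env approach, the sketch below writes a small .env file into a scratch directory. The variable names ELASTIC_VERSION and ES_MEM_LIMIT, and the helper write_env_file, are made up for this example; pick whatever names suit your setup.

```shell
#!/bin/sh
# write_env_file DIR: create DIR/.env with values that a compose file can
# reference as ${ELASTIC_VERSION} and ${ES_MEM_LIMIT}.
# Both variable names and the function name are hypothetical.
write_env_file() {
    cat > "$1/.env" <<'EOF'
ELASTIC_VERSION=7.17.16
ES_MEM_LIMIT=2147483648
EOF
}

# Example: write into a scratch directory and show the result.
demo_dir=$(mktemp -d)
write_env_file "$demo_dir"
cat "$demo_dir/.env"
```

In the compose file, the image line could then read image: docker.elastic.co/elasticsearch/elasticsearch:${ELASTIC_VERSION}, and Compose substitutes the value from the .env file in the same directory.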

Define Logstash Data Processing Pipeline

In this setup, we will configure Logstash to receive event data from Beats (Filebeat to be specific) for further processing and stashing onto the search analytics engine, Elasticsearch.

Note that Logstash is only necessary if you need to apply further processing to your event data. For example, extracting custom fields from the event data, mutating the event data etc. Otherwise, you can push the data directly to Elasticsearch from Beats.

In this setup, we will use a sample Logstash processing pipeline for ModSecurity audit logs;

mkdir -p $HOME/elastic-docker/logstash/conf.d

vim $HOME/elastic-docker/logstash/conf.d/modsec.conf

input {
  beats {
    port => 5044
  }
}

filter {
  # Extract event time, log severity level, source of attack (client), and the alert message.
  grok {
    match => { "message" => "(?<event_time>%{MONTH}\s%{MONTHDAY}\s%{TIME}\s%{YEAR})\] \[\:%{LOGLEVEL:log_level}.*client\s%{IPORHOST:src_ip}:\d+]\s(?<alert_message>.*)" }
  }
  # Extract Rules File from Alert Message
  grok {
    match => { "alert_message" => "(?<rulesfile>\[file \"(/.+.conf)\"\])" }
  }
  grok {
    match => { "rulesfile" => "(?<rules_file>/.+.conf)" }
  }
  # Extract Attack Type from Rules File
  grok {
    match => { "rulesfile" => "(?<attack_type>[A-Z]+-[A-Z][^.]+)" }
  }
  # Extract Rule ID from Alert Message
  grok {
    match => { "alert_message" => "(?<ruleid>\[id \"(\d+)\"\])" }
  }
  grok {
    match => { "ruleid" => "(?<rule_id>\d+)" }
  }
  # Extract Attack Message (msg) from Alert Message
  grok {
    match => { "alert_message" => "(?<msg>\[msg \S(.*?)\"\])" }
  }
  grok {
    match => { "msg" => "(?<alert_msg>\"(.*?)\")" }
  }
  # Extract the User/Scanner Agent from Alert Message
  grok {
    match => { "alert_message" => "(?<scanner>User-Agent' \SValue: `(.*?)')" }
  }
  grok {
    match => { "scanner" => "(?<user_agent>:(.*?)\')" }
  }
  grok {
    match => { "alert_message" => "(?<agent>User-Agent: (.*?)\')" }
  }
  grok {
    match => { "agent" => "(?<user_agent>: (.*?)\')" }
  }
  # Extract the Target Host
  grok {
    match => { "alert_message" => "(hostname \"%{IPORHOST:dst_host})" }
  }
  # Extract the Request URI
  grok {
    match => { "alert_message" => "(uri \"%{URIPATH:request_uri})" }
  }
  grok {
    match => { "alert_message" => "(?<ref>referer: (.*))" }
  }
  grok {
    match => { "ref" => "(?<referer> (.*))" }
  }
  mutate {
    # Remove unnecessary characters from the fields.
    gsub => [
      "alert_msg", "[\"]", "",
      "user_agent", "[:\"'`]", "",
      "user_agent", "^\s*", "",
      "referer", "^\s*", ""
    ]
    # Remove the Unnecessary fields so we can only remain with
    # General message, rules_file, attack_type, rule_id, alert_msg, user_agent, hostname (being attacked), Request URI and Referer.
    remove_field => [ "alert_message", "rulesfile", "ruleid", "msg", "scanner", "agent", "ref" ]
  }
}

output {
  elasticsearch {
    hosts => ["kifarunix-demo-es:9200"]
  }
}
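To get a feel for what the rule ID extraction in the pipeline is after, roughly the same thing can be tried on the command line with sed. This is not how Logstash evaluates grok patterns, just an approximation for quick experiments. The function name extract_rule_id and the sample fragment (including its file path and ID) are made up for this example.

```shell
#!/bin/sh
# extract_rule_id: read alert text on stdin and print the digits found
# inside [id "..."]. (Hypothetical helper; approximates the rule_id grok.)
extract_rule_id() {
    sed -nE 's/.*\[id "([0-9]+)"\].*/\1/p'
}

# Made-up fragment in the general shape of a ModSecurity alert message.
sample='[file "/etc/modsecurity/rules/EXAMPLE.conf"] [id "123456"] [msg "Example alert"]'
printf '%s\n' "$sample" | extract_rule_id
```

For the made-up sample above, the function prints 123456. Lines without an [id "..."] section produce no output.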

Check Docker Compose file Syntax;

docker-compose -f docker-compose.yml config

If there is any error, it will be printed. Otherwise, the Docker compose file contents are printed to standard output.

If you are in the same directory where docker-compose.yml file is located, simply run;

docker-compose config
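The syntax check does not necessarily tell you whether the files referenced by the stack are in place. Before starting the containers, a small preflight check along the lines below can confirm that the compose file and at least one Logstash pipeline file exist. This is a sketch; preflight is a made-up function name.

```shell
#!/bin/sh
# preflight DIR: succeed if DIR holds docker-compose.yml and at least one
# pipeline file under logstash/conf.d (the directory mounted into Logstash).
# (preflight is a hypothetical helper name.)
preflight() {
    [ -f "$1/docker-compose.yml" ] || { echo "missing $1/docker-compose.yml"; return 1; }
    for f in "$1"/logstash/conf.d/*.conf; do
        # If the glob matched nothing, $f is the literal pattern and -f fails.
        [ -f "$f" ] && return 0
    done
    echo "no *.conf files in $1/logstash/conf.d"
    return 1
}

# Usage: preflight "$HOME/elastic-docker" && echo "ready to start the stack"
```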

Deploy Elastic Stack Using Docker Compose file

Everything is now set up and we are ready to build and start our Elastic Stack instances using the docker-compose up command.

Navigate to the main directory where the Docker compose file is located. In my setup the directory is $HOME/elastic-docker.

cd $HOME/elastic-docker

docker-compose up

The command creates and starts the containers in foreground.

Sample output;

...

kifarunix-demo-es | {"type": "server", "timestamp": "2024-01-15T19:35:00,516Z", "level": "INFO", "component": "o.e.c.m.MetadataIndexTemplateService", "cluster.name": "es-docker-cluster", "node.name": "kifarunix-demo-es", "message": "adding template [.monitoring-alerts-7] for index patterns [.monitoring-alerts-7]", "cluster.uuid": "DBy4Mwk-TB2Jum_AWDY0jw", "node.id": "4Fb4-CZ0QhG2KcZL79-8cw" }

kifarunix-demo-ls | [2024-01-15T19:35:00,590][INFO ][logstash.inputs.beats ][main] Beats inputs: Starting input listener {:address=>"0.0.0.0:5044"}

kifarunix-demo-ls | [2024-01-15T19:35:00,606][INFO ][logstash.javapipeline ][main] Pipeline started {"pipeline.id"=>"main"}

kifarunix-demo-ls | [2024-01-15T19:35:00,672][INFO ][logstash.agent ] Pipelines running {:count=>1, :running_pipelines=>[:main], :non_running_pipelines=>[]}

kifarunix-demo-ls | [2024-01-15T19:35:00,730][INFO ][org.logstash.beats.Server][main][ee92a68a4dc1b148e25ac3c899680db31f95563138e922a364e18e3dc052d084] Starting server on port: 5044

kifarunix-demo-ls | [2024-01-15T19:35:00,947][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600}

...

...

kifarunix-demo-kibana | {"type":"log","@timestamp":"2024-01-15T19:36:49Z","tags":["status","plugin:[email protected]","info"],"pid":8,"state":"green","message":"Status changed from uninitialized to green - Ready","prevState":"uninitialized","prevMsg":"uninitialized"}

kifarunix-demo-kibana | {"type":"log","@timestamp":"2024-01-15T19:36:49Z","tags":["listening","info"],"pid":8,"message":"Server running at http://0:5601"}

kifarunix-demo-es | {"type": "server", "timestamp": "2024-01-15T19:36:49,957Z", "level": "INFO", "component": "o.e.c.m.MetadataMappingService", "cluster.name": "es-docker-cluster", "node.name": "kifarunix-demo-es", "message": "[.kibana_task_manager_1/a3B8lwzxQjiNHtFEAExOaQ] update_mapping [_doc]", "cluster.uuid": "DBy4Mwk-TB2Jum_AWDY0jw", "node.id": "4Fb4-CZ0QhG2KcZL79-8cw" }

kifarunix-demo-kibana | {"type":"log","@timestamp":"2024-01-15T19:36:50Z","tags":["info","http","server","Kibana"],"pid":8,"message":"http server running at http://0:5601"}

...

When you stop the docker-compose up command, all containers are stopped.

From another console, you can check the running containers. Note that you can use the docker-compose command as you would the docker command.

docker-compose ps

Name                    Command                          State   Ports
-------------------------------------------------------------------------------------------------
kifarunix-demo-es       /tini -- /usr/local/bin/do ...   Up      0.0.0.0:9200->9200/tcp, 9300/tcp
kifarunix-demo-kibana   /usr/local/bin/dumb-init - ...   Up      0.0.0.0:5601->5601/tcp
kifarunix-demo-ls       /usr/local/bin/docker-entr ...   Up      0.0.0.0:5044->5044/tcp, 9600/tcp

From the output, you can see that the containers are running and their ports exposed on the host (any IP address) to allow external access.

Press Ctrl+C to cancel the foreground command and stop the containers. You can then run the stack containers in the background using the -d option.

To relaunch the containers in the background;

docker-compose up -d

Starting kifarunix-demo-ls ... done

Starting kifarunix-demo-es ... done

Starting kifarunix-demo-kibana ... done
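When the containers run in the background, Elasticsearch may need some time before it answers requests. A polling loop like the sketch below can wait for it. The function name wait_for_url is made up, and the usage line assumes that port 9200 is published on localhost as in the compose file.

```shell
#!/bin/sh
# wait_for_url URL ATTEMPTS DELAY: poll URL until it answers with a
# successful HTTP status, trying ATTEMPTS times with DELAY seconds between
# tries. (wait_for_url is a hypothetical helper name.)
wait_for_url() {
    n=0
    while [ "$n" -lt "$2" ]; do
        # -f makes curl fail on HTTP errors; -s and -o discard the output;
        # -m 5 caps each attempt at five seconds.
        if curl -fs -m 5 -o /dev/null "$1"; then
            return 0
        fi
        n=$((n + 1))
        sleep "$3"
    done
    return 1
}

# Usage (assumes 9200 is published on localhost):
#   wait_for_url http://localhost:9200/_cluster/health 30 2 && echo "Elasticsearch is up"
```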

You can as well list the running containers using docker command;

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

130eec8df661 docker.elastic.co/kibana/kibana:7.17.16 "/usr/local/bin/dumb…" 38 minutes ago Up About a minute 0.0.0.0:5601->5601/tcp kifarunix-demo-kibana

6648df61c44b docker.elastic.co/logstash/logstash:7.17.16 "/usr/local/bin/dock…" 41 minutes ago Up About a minute 0.0.0.0:5044->5044/tcp, 9600/tcp kifarunix-demo-ls

db9936abbee2 docker.elastic.co/elasticsearch/elasticsearch:7.17.16 "/tini -- /usr/local…" 41 minutes ago Up About a minute 0.0.0.0:9200->9200/tcp, 9300/tcp kifarunix-demo-es

To find the details of each container, use docker inspect <container-name> command. For example

docker inspect kifarunix-demo-es

To get the logs of a container, use the command docker logs [OPTIONS] CONTAINER. For example, to get Elasticsearch container logs;

docker logs kifarunix-demo-es

If you need to check a specific number of log lines, you can use the --tail option. E.g. to get the last 50 log lines and keep following new output (-f);

docker logs --tail 50 -f kifarunix-demo-es
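Since the Elasticsearch container writes JSON log lines with a "level" field, as in the sample output earlier, you can narrow the logs down to warnings and errors with a simple filter. This is a sketch; only_problems is a made-up function name.

```shell
#!/bin/sh
# only_problems: keep JSON log lines whose level is WARN or ERROR.
# (only_problems is a hypothetical helper name.)
only_problems() {
    grep -E '"level": "(WARN|ERROR)"'
}

# Usage: docker logs kifarunix-demo-es 2>&1 | only_problems
```

Note that grep exits with a non-zero status when nothing matches, which here simply means that no warnings or errors were found.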

Accessing Kibana Container from Browser

Once the stack is up and running, you can access Kibana externally using the host IP address and the port on which it is exposed. In our setup, Kibana container port 5601 is exposed on the same port on the host;

docker port kifarunix-demo-kibana

5601/tcp -> 0.0.0.0:5601

This means that you can access the Kibana container port via any interface on the host, port 5601. Similarly, you can check port exposure for the other containers using the same command.

Therefore, you can access Kibana using your Container host address, http://<IP-Address>:5601.

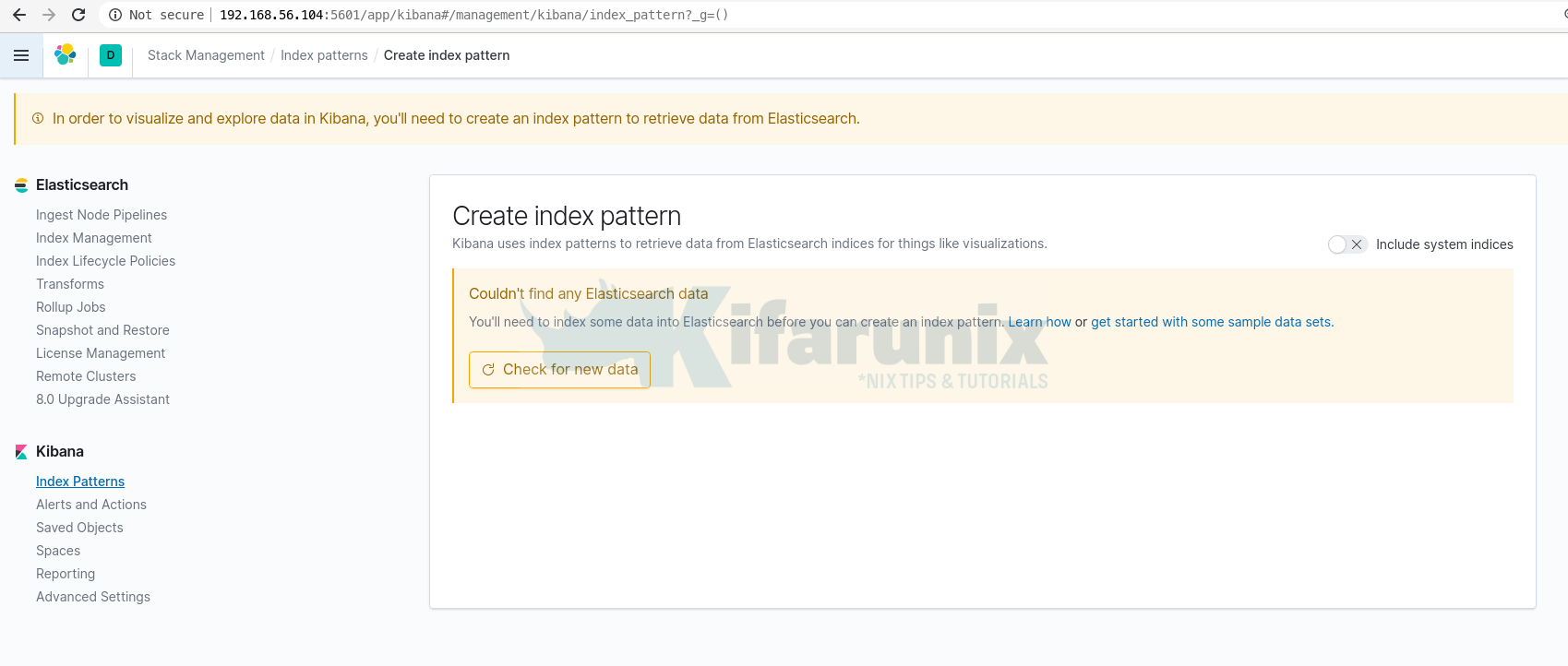

As you can see, we do not have any data yet in our stack.

Sending data to Elastic Stack

Since we configured Logstash to receive event data from Beats, we will configure Filebeat to forward events.

We already covered how to install and configure Filebeat to forward event data in our previous guides;

Install and Configure Filebeat on CentOS 8

Install Filebeat on Fedora 30/Fedora 29/CentOS 7

Install and Configure Filebeat 7 on Ubuntu 18.04/Debian 9.8

Once you forward data to your Logstash container, the next thing you need to do is create a Kibana index pattern.

Open the menu, then go to Stack Management > Kibana > Index Patterns.

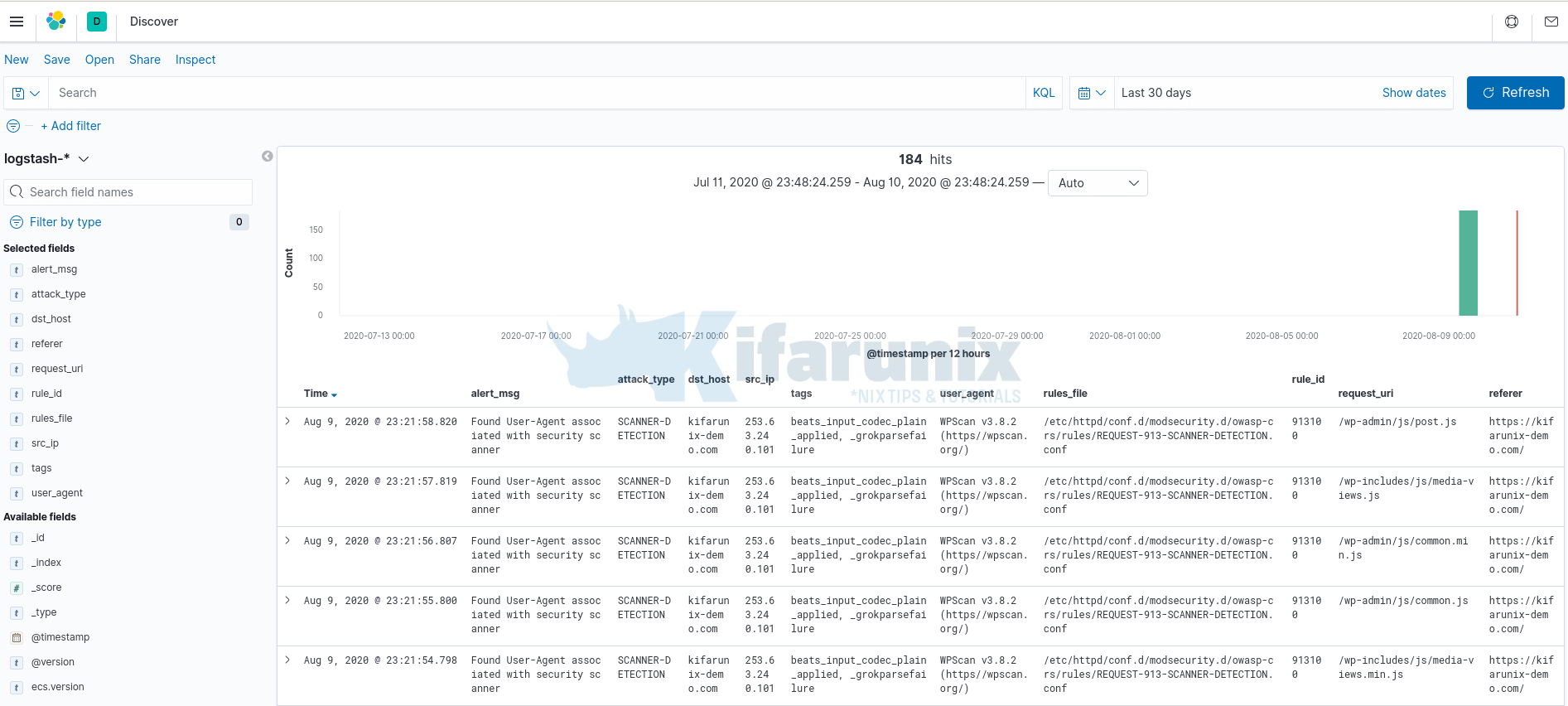
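If you prefer the command line, an index pattern can likely also be created through the Kibana saved objects API. The sketch below only builds the JSON body; the commented usage line shows how it might be sent. The function name index_pattern_payload is made up, and the logstash-* title is an assumption about how your Logstash indices are named, so adjust it to match your indices.

```shell
#!/bin/sh
# index_pattern_payload TITLE: print the JSON body for a Kibana index
# pattern that uses @timestamp as its time field.
# (index_pattern_payload is a hypothetical helper name.)
index_pattern_payload() {
    printf '{"attributes":{"title":"%s","timeFieldName":"@timestamp"}}\n' "$1"
}

# logstash-* is an assumed index name pattern; adjust it to your indices.
index_pattern_payload 'logstash-*'

# Usage (assumes Kibana is reachable on localhost:5601 without authentication):
#   curl -s -X POST 'http://localhost:5601/api/saved_objects/index-pattern' \
#       -H 'kbn-xsrf: true' -H 'Content-Type: application/json' \
#       -d "$(index_pattern_payload 'logstash-*')"
```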

Once done, head to the Discover menu to view your data. You should now be able to see your Logstash custom fields populated.

That marks the end of our tutorial on how to deploy a single node Elastic Stack cluster on Docker Containers.

Reference

Running Elastic Stack on Docker
