In this tutorial, you will learn how to deploy Filebeat using Ansible. Ansible is an open-source automation tool used for configuration management, application deployment, and task automation. It is designed to simplify IT automation by providing a way to automate tasks across a large number of computers.

Deploying Filebeat using Ansible

Example Environment

To demonstrate how Ansible can deploy Filebeat across different Linux systems, we will use four systems (managed nodes) as deployment targets;

| Linux Distribution | IP Address |
| --- | --- |
| Oracle Linux 9 | 192.168.56.152 |
| Rocky Linux 9 | 192.168.56.144 |
| Debian 11 | 192.168.56.153 |
| Ubuntu 22.04 | 192.168.56.142 |

We also have;

- ELK (8) server running on Ubuntu 22.04, IP address 192.168.56.124

- Ansible Control Node running on Ubuntu 22.04, IP address 192.168.56.10

Install Ansible on Linux

To begin with, you need to install Ansible on your control node. A control node, in the context of Ansible, is the machine where Ansible is installed and from which all the automation tasks are executed.

In this guide, we use an Ubuntu 22.04 node as our Ansible control node.

To install Ansible on Ubuntu 22.04, proceed as follows;

Run system update and install Ansible using pip;

sudo apt update

sudo apt install python3-pip -y

python3 -m pip install --upgrade pip

sudo python3 -m pip install ansible

Enable Ansible auto-completion;

sudo python3 -m pip install argcomplete

Confirm the Ansible version;

ansible --version

ansible [core 2.14.3]

config file = None

configured module search path = ['/home/kifarunix/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/local/lib/python3.10/dist-packages/ansible

ansible collection location = /home/kifarunix/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/local/bin/ansible

python version = 3.10.6 (main, Nov 14 2022, 16:10:14) [GCC 11.3.0] (/usr/bin/python3)

jinja version = 3.0.3

libyaml = True

Create Ansible User on Managed Nodes

By default, Ansible connects to the remote hosts as the user you are logged in as on the control node.

If the user you plan to connect as doesn't exist on the remote hosts, you need to create the account.

So, on all hosts managed by Ansible, create an account with sudo rights. This is the only manual task you might need to do at the beginning.
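Because the group that grants sudo rights differs by distribution family (sudo on Debian-based systems, wheel on RHEL-based ones), the choice can be scripted. The sketch below assumes /etc/os-release is present and that its ID and ID_LIKE values identify the family; admin_group_for is a hypothetical helper, not part of Ansible, and the script only prints the useradd command rather than running it.

```shell
#!/bin/sh
# admin_group_for: hypothetical helper that maps os-release identifiers
# to the group that grants sudo rights on that distribution family.
admin_group_for() {
    # $1: space-separated ID and ID_LIKE values from /etc/os-release
    case " $1 " in
        *" debian "*|*" ubuntu "*) echo sudo ;;
        *" rhel "*|*" fedora "*|*" centos "*) echo wheel ;;
        *) return 1 ;;
    esac
}

if [ -r /etc/os-release ]; then
    (
        # Source in a subshell so the os-release variables do not leak.
        . /etc/os-release
        if group=$(admin_group_for "${ID:-} ${ID_LIKE:-}"); then
            # Print the command instead of executing it.
            echo "sudo useradd -G $group -s /bin/bash -m kifadmin"
        else
            echo "unrecognised distribution: ${ID:-unknown}" >&2
        fi
    )
fi
```

Run on a managed node, this prints the matching useradd command for review before you execute it.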

On Debian based systems;

sudo useradd -G sudo -s /bin/bash -m kifadmin

On RHEL based systems;

sudo useradd -G wheel -s /bin/bash -m kifadmin

Set the account password;

sudo passwd kifadmin

Setup Ansible SSH Keys

When using Ansible, you have two options for authenticating with remote systems:

- SSH keys or

- password authentication

SSH key authentication is the preferred method of connection, as it is considered more secure and more convenient than password authentication.

Therefore, on the Ansible control node, you need to generate a public and private key pair and then copy the public key to the remote system’s authorized keys file.

As a non-root user on the control node, run the command below to generate an SSH key pair. It is recommended to use SSH keys with a passphrase for increased security.

ssh-keygen

Sample output;

Generating public/private rsa key pair.

Enter file in which to save the key (/home/kifarunix/.ssh/id_rsa): /home/kifarunix/.ssh/id_ansible_rsa

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/kifarunix/.ssh/id_ansible_rsa

Your public key has been saved in /home/kifarunix/.ssh/id_ansible_rsa.pub

The key fingerprint is:

SHA256:lXmQC0UVM1bIpMeg1t0L0tofaLRPSEW65RnO7hVGcsQ kifarunix@control-node

The key's randomart image is:

+---[RSA 3072]----+

| o==B==. |

| .o.@o*.E |

| o.B.@.+o |

| . ..O @++ |

| S . B Oo |

| . =...|

| + .|

| . . |

| . |

+----[SHA256]-----+

Note that we already had another SSH key pair stored in the default file, /home/kifarunix/.ssh/id_rsa, used for other services. That is why we created this key pair in a different file, /home/kifarunix/.ssh/id_ansible_rsa.

In the Ansible configuration file, you can define a custom path to the SSH private key using the private_key_file option.

Copy Ansible SSH Public Key to Managed Nodes

Once you have generated the SSH key pair, copy the public key to the user account you will use on the managed nodes for Ansible deployment tasks;

for i in 142 144 152 153; do ssh-copy-id kifadmin@192.168.56.$i; done

If you are using a non-default SSH key file, specify the path to the key using the -i option;

for i in 142 144 152 153; do ssh-copy-id -i /home/kifarunix/.ssh/id_ansible_rsa kifadmin@192.168.56.$i; done

Create Ansible Configuration Directory

By default, Ansible keeps its configuration under /etc/ansible.

If you install Ansible via pip, chances are that the default configuration is not created.

You can therefore create your own configuration directory to easily control Ansible.

mkdir $HOME/ansible

Similarly, create your custom Ansible configuration file, ansible.cfg.

This is what a default Ansible configuration file looks like;

cat /etc/ansible/ansible.cfg

# config file for ansible -- https://ansible.com/

# ===============================================

# nearly all parameters can be overridden in ansible-playbook

# or with command line flags. ansible will read ANSIBLE_CONFIG,

# ansible.cfg in the current working directory, .ansible.cfg in

# the home directory or /etc/ansible/ansible.cfg, whichever it

# finds first

[defaults]

# some basic default values...

#inventory = /etc/ansible/hosts

#library = /usr/share/my_modules/

#module_utils = /usr/share/my_module_utils/

#remote_tmp = ~/.ansible/tmp

#local_tmp = ~/.ansible/tmp

#plugin_filters_cfg = /etc/ansible/plugin_filters.yml

#forks = 5

#poll_interval = 15

#sudo_user = root

#ask_sudo_pass = True

#ask_pass = True

#transport = smart

#remote_port = 22

#module_lang = C

#module_set_locale = False

# plays will gather facts by default, which contain information about

# the remote system.

#

# smart - gather by default, but don't regather if already gathered

# implicit - gather by default, turn off with gather_facts: False

# explicit - do not gather by default, must say gather_facts: True

#gathering = implicit

# This only affects the gathering done by a play's gather_facts directive,

# by default gathering retrieves all facts subsets

# all - gather all subsets

# network - gather min and network facts

# hardware - gather hardware facts (longest facts to retrieve)

# virtual - gather min and virtual facts

# facter - import facts from facter

# ohai - import facts from ohai

# You can combine them using comma (ex: network,virtual)

# You can negate them using ! (ex: !hardware,!facter,!ohai)

# A minimal set of facts is always gathered.

#gather_subset = all

# some hardware related facts are collected

# with a maximum timeout of 10 seconds. This

# option lets you increase or decrease that

# timeout to something more suitable for the

# environment.

# gather_timeout = 10

# Ansible facts are available inside the ansible_facts.* dictionary

# namespace. This setting maintains the behaviour which was the default prior

# to 2.5, duplicating these variables into the main namespace, each with a

# prefix of 'ansible_'.

# This variable is set to True by default for backwards compatibility. It

# will be changed to a default of 'False' in a future release.

# ansible_facts.

# inject_facts_as_vars = True

# additional paths to search for roles in, colon separated

#roles_path = /etc/ansible/roles

# uncomment this to disable SSH key host checking

#host_key_checking = False

# change the default callback, you can only have one 'stdout' type enabled at a time.

#stdout_callback = skippy

## Ansible ships with some plugins that require whitelisting,

## this is done to avoid running all of a type by default.

## These setting lists those that you want enabled for your system.

## Custom plugins should not need this unless plugin author specifies it.

# enable callback plugins, they can output to stdout but cannot be 'stdout' type.

#callback_whitelist = timer, mail

# Determine whether includes in tasks and handlers are "static" by

# default. As of 2.0, includes are dynamic by default. Setting these

# values to True will make includes behave more like they did in the

# 1.x versions.

#task_includes_static = False

#handler_includes_static = False

# Controls if a missing handler for a notification event is an error or a warning

#error_on_missing_handler = True

# change this for alternative sudo implementations

#sudo_exe = sudo

# What flags to pass to sudo

# WARNING: leaving out the defaults might create unexpected behaviours

#sudo_flags = -H -S -n

# SSH timeout

#timeout = 10

# default user to use for playbooks if user is not specified

# (/usr/bin/ansible will use current user as default)

#remote_user = root

# logging is off by default unless this path is defined

# if so defined, consider logrotate

#log_path = /var/log/ansible.log

# default module name for /usr/bin/ansible

#module_name = command

# use this shell for commands executed under sudo

# you may need to change this to bin/bash in rare instances

# if sudo is constrained

#executable = /bin/sh

# if inventory variables overlap, does the higher precedence one win

# or are hash values merged together? The default is 'replace' but

# this can also be set to 'merge'.

#hash_behaviour = replace

# by default, variables from roles will be visible in the global variable

# scope. To prevent this, the following option can be enabled, and only

# tasks and handlers within the role will see the variables there

#private_role_vars = yes

# list any Jinja2 extensions to enable here:

#jinja2_extensions = jinja2.ext.do,jinja2.ext.i18n

# if set, always use this private key file for authentication, same as

# if passing --private-key to ansible or ansible-playbook

#private_key_file = /path/to/file

# If set, configures the path to the Vault password file as an alternative to

# specifying --vault-password-file on the command line.

#vault_password_file = /path/to/vault_password_file

# format of string {{ ansible_managed }} available within Jinja2

# templates indicates to users editing templates files will be replaced.

# replacing {file}, {host} and {uid} and strftime codes with proper values.

#ansible_managed = Ansible managed: {file} modified on %Y-%m-%d %H:%M:%S by {uid} on {host}

# {file}, {host}, {uid}, and the timestamp can all interfere with idempotence

# in some situations so the default is a static string:

#ansible_managed = Ansible managed

# by default, ansible-playbook will display "Skipping [host]" if it determines a task

# should not be run on a host. Set this to "False" if you don't want to see these "Skipping"

# messages. NOTE: the task header will still be shown regardless of whether or not the

# task is skipped.

#display_skipped_hosts = True

# by default, if a task in a playbook does not include a name: field then

# ansible-playbook will construct a header that includes the task's action but

# not the task's args. This is a security feature because ansible cannot know

# if the *module* considers an argument to be no_log at the time that the

# header is printed. If your environment doesn't have a problem securing

# stdout from ansible-playbook (or you have manually specified no_log in your

# playbook on all of the tasks where you have secret information) then you can

# safely set this to True to get more informative messages.

#display_args_to_stdout = False

# by default (as of 1.3), Ansible will raise errors when attempting to dereference

# Jinja2 variables that are not set in templates or action lines. Uncomment this line

# to revert the behavior to pre-1.3.

#error_on_undefined_vars = False

# by default (as of 1.6), Ansible may display warnings based on the configuration of the

# system running ansible itself. This may include warnings about 3rd party packages or

# other conditions that should be resolved if possible.

# to disable these warnings, set the following value to False:

#system_warnings = True

# by default (as of 1.4), Ansible may display deprecation warnings for language

# features that should no longer be used and will be removed in future versions.

# to disable these warnings, set the following value to False:

#deprecation_warnings = True

# (as of 1.8), Ansible can optionally warn when usage of the shell and

# command module appear to be simplified by using a default Ansible module

# instead. These warnings can be silenced by adjusting the following

# setting or adding warn=yes or warn=no to the end of the command line

# parameter string. This will for example suggest using the git module

# instead of shelling out to the git command.

# command_warnings = False

# set plugin path directories here, separate with colons

#action_plugins = /usr/share/ansible/plugins/action

#become_plugins = /usr/share/ansible/plugins/become

#cache_plugins = /usr/share/ansible/plugins/cache

#callback_plugins = /usr/share/ansible/plugins/callback

#connection_plugins = /usr/share/ansible/plugins/connection

#lookup_plugins = /usr/share/ansible/plugins/lookup

#inventory_plugins = /usr/share/ansible/plugins/inventory

#vars_plugins = /usr/share/ansible/plugins/vars

#filter_plugins = /usr/share/ansible/plugins/filter

#test_plugins = /usr/share/ansible/plugins/test

#terminal_plugins = /usr/share/ansible/plugins/terminal

#strategy_plugins = /usr/share/ansible/plugins/strategy

# by default, ansible will use the 'linear' strategy but you may want to try

# another one

#strategy = free

# by default callbacks are not loaded for /bin/ansible, enable this if you

# want, for example, a notification or logging callback to also apply to

# /bin/ansible runs

#bin_ansible_callbacks = False

# don't like cows? that's unfortunate.

# set to 1 if you don't want cowsay support or export ANSIBLE_NOCOWS=1

#nocows = 1

# set which cowsay stencil you'd like to use by default. When set to 'random',

# a random stencil will be selected for each task. The selection will be filtered

# against the `cow_whitelist` option below.

#cow_selection = default

#cow_selection = random

# when using the 'random' option for cowsay, stencils will be restricted to this list.

# it should be formatted as a comma-separated list with no spaces between names.

# NOTE: line continuations here are for formatting purposes only, as the INI parser

# in python does not support them.

#cow_whitelist=bud-frogs,bunny,cheese,daemon,default,dragon,elephant-in-snake,elephant,eyes,\

# hellokitty,kitty,luke-koala,meow,milk,moofasa,moose,ren,sheep,small,stegosaurus,\

# stimpy,supermilker,three-eyes,turkey,turtle,tux,udder,vader-koala,vader,www

# don't like colors either?

# set to 1 if you don't want colors, or export ANSIBLE_NOCOLOR=1

#nocolor = 1

# if set to a persistent type (not 'memory', for example 'redis') fact values

# from previous runs in Ansible will be stored. This may be useful when

# wanting to use, for example, IP information from one group of servers

# without having to talk to them in the same playbook run to get their

# current IP information.

#fact_caching = memory

#This option tells Ansible where to cache facts. The value is plugin dependent.

#For the jsonfile plugin, it should be a path to a local directory.

#For the redis plugin, the value is a host:port:database triplet: fact_caching_connection = localhost:6379:0

#fact_caching_connection=/tmp

# retry files

# When a playbook fails a .retry file can be created that will be placed in ~/

# You can enable this feature by setting retry_files_enabled to True

# and you can change the location of the files by setting retry_files_save_path

#retry_files_enabled = False

#retry_files_save_path = ~/.ansible-retry

# squash actions

# Ansible can optimise actions that call modules with list parameters

# when looping. Instead of calling the module once per with_ item, the

# module is called once with all items at once. Currently this only works

# under limited circumstances, and only with parameters named 'name'.

#squash_actions = apk,apt,dnf,homebrew,pacman,pkgng,yum,zypper

# prevents logging of task data, off by default

#no_log = False

# prevents logging of tasks, but only on the targets, data is still logged on the master/controller

#no_target_syslog = False

# controls whether Ansible will raise an error or warning if a task has no

# choice but to create world readable temporary files to execute a module on

# the remote machine. This option is False by default for security. Users may

# turn this on to have behaviour more like Ansible prior to 2.1.x. See

# https://docs.ansible.com/ansible/become.html#becoming-an-unprivileged-user

# for more secure ways to fix this than enabling this option.

#allow_world_readable_tmpfiles = False

# controls the compression level of variables sent to

# worker processes. At the default of 0, no compression

# is used. This value must be an integer from 0 to 9.

#var_compression_level = 9

# controls what compression method is used for new-style ansible modules when

# they are sent to the remote system. The compression types depend on having

# support compiled into both the controller's python and the client's python.

# The names should match with the python Zipfile compression types:

# * ZIP_STORED (no compression. available everywhere)

# * ZIP_DEFLATED (uses zlib, the default)

# These values may be set per host via the ansible_module_compression inventory

# variable

#module_compression = 'ZIP_DEFLATED'

# This controls the cutoff point (in bytes) on --diff for files

# set to 0 for unlimited (RAM may suffer!).

#max_diff_size = 1048576

# This controls how ansible handles multiple --tags and --skip-tags arguments

# on the CLI. If this is True then multiple arguments are merged together. If

# it is False, then the last specified argument is used and the others are ignored.

# This option will be removed in 2.8.

#merge_multiple_cli_flags = True

# Controls showing custom stats at the end, off by default

#show_custom_stats = True

# Controls which files to ignore when using a directory as inventory with

# possibly multiple sources (both static and dynamic)

#inventory_ignore_extensions = ~, .orig, .bak, .ini, .cfg, .retry, .pyc, .pyo

# This family of modules use an alternative execution path optimized for network appliances

# only update this setting if you know how this works, otherwise it can break module execution

#network_group_modules=eos, nxos, ios, iosxr, junos, vyos

# When enabled, this option allows lookups (via variables like {{lookup('foo')}} or when used as

# a loop with `with_foo`) to return data that is not marked "unsafe". This means the data may contain

# jinja2 templating language which will be run through the templating engine.

# ENABLING THIS COULD BE A SECURITY RISK

#allow_unsafe_lookups = False

# set default errors for all plays

#any_errors_fatal = False

[inventory]

# enable inventory plugins, default: 'host_list', 'script', 'auto', 'yaml', 'ini', 'toml'

#enable_plugins = host_list, virtualbox, yaml, constructed

# ignore these extensions when parsing a directory as inventory source

#ignore_extensions = .pyc, .pyo, .swp, .bak, ~, .rpm, .md, .txt, ~, .orig, .ini, .cfg, .retry

# ignore files matching these patterns when parsing a directory as inventory source

#ignore_patterns=

# If 'true' unparsed inventory sources become fatal errors, they are warnings otherwise.

#unparsed_is_failed=False

[privilege_escalation]

#become=True

#become_method=sudo

#become_user=root

#become_ask_pass=False

[paramiko_connection]

# uncomment this line to cause the paramiko connection plugin to not record new host

# keys encountered. Increases performance on new host additions. Setting works independently of the

# host key checking setting above.

#record_host_keys=False

# by default, Ansible requests a pseudo-terminal for commands executed under sudo. Uncomment this

# line to disable this behaviour.

#pty=False

# paramiko will default to looking for SSH keys initially when trying to

# authenticate to remote devices. This is a problem for some network devices

# that close the connection after a key failure. Uncomment this line to

# disable the Paramiko look for keys function

#look_for_keys = False

# When using persistent connections with Paramiko, the connection runs in a

# background process. If the host doesn't already have a valid SSH key, by

# default Ansible will prompt to add the host key. This will cause connections

# running in background processes to fail. Uncomment this line to have

# Paramiko automatically add host keys.

#host_key_auto_add = True

[ssh_connection]

# ssh arguments to use

# Leaving off ControlPersist will result in poor performance, so use

# paramiko on older platforms rather than removing it, -C controls compression use

#ssh_args = -C -o ControlMaster=auto -o ControlPersist=60s

# The base directory for the ControlPath sockets.

# This is the "%(directory)s" in the control_path option

#

# Example:

# control_path_dir = /tmp/.ansible/cp

#control_path_dir = ~/.ansible/cp

# The path to use for the ControlPath sockets. This defaults to a hashed string of the hostname,

# port and username (empty string in the config). The hash mitigates a common problem users

# found with long hostnames and the conventional %(directory)s/ansible-ssh-%%h-%%p-%%r format.

# In those cases, a "too long for Unix domain socket" ssh error would occur.

#

# Example:

# control_path = %(directory)s/%%h-%%r

#control_path =

# Enabling pipelining reduces the number of SSH operations required to

# execute a module on the remote server. This can result in a significant

# performance improvement when enabled, however when using "sudo:" you must

# first disable 'requiretty' in /etc/sudoers

#

# By default, this option is disabled to preserve compatibility with

# sudoers configurations that have requiretty (the default on many distros).

#

#pipelining = False

# Control the mechanism for transferring files (old)

# * smart = try sftp and then try scp [default]

# * True = use scp only

# * False = use sftp only

#scp_if_ssh = smart

# Control the mechanism for transferring files (new)

# If set, this will override the scp_if_ssh option

# * sftp = use sftp to transfer files

# * scp = use scp to transfer files

# * piped = use 'dd' over SSH to transfer files

# * smart = try sftp, scp, and piped, in that order [default]

#transfer_method = smart

# if False, sftp will not use batch mode to transfer files. This may cause some

# types of file transfer failures impossible to catch however, and should

# only be disabled if your sftp version has problems with batch mode

#sftp_batch_mode = False

# The -tt argument is passed to ssh when pipelining is not enabled because sudo

# requires a tty by default.

#usetty = True

# Number of times to retry an SSH connection to a host, in case of UNREACHABLE.

# For each retry attempt, there is an exponential backoff,

# so after the first attempt there is 1s wait, then 2s, 4s etc. up to 30s (max).

#retries = 3

[persistent_connection]

# Configures the persistent connection timeout value in seconds. This value is

# how long the persistent connection will remain idle before it is destroyed.

# If the connection doesn't receive a request before the timeout value

# expires, the connection is shutdown. The default value is 30 seconds.

#connect_timeout = 30

# The command timeout value defines the amount of time to wait for a command

# or RPC call before timing out. The value for the command timeout must

# be less than the value of the persistent connection idle timeout (connect_timeout)

# The default value is 30 second.

#command_timeout = 30

[accelerate]

#accelerate_port = 5099

#accelerate_timeout = 30

#accelerate_connect_timeout = 5.0

# The daemon timeout is measured in minutes. This time is measured

# from the last activity to the accelerate daemon.

#accelerate_daemon_timeout = 30

# If set to yes, accelerate_multi_key will allow multiple

# private keys to be uploaded to it, though each user must

# have access to the system via SSH to add a new key. The default

# is "no".

#accelerate_multi_key = yes

[selinux]

# file systems that require special treatment when dealing with security context

# the default behaviour that copies the existing context or uses the user default

# needs to be changed to use the file system dependent context.

#special_context_filesystems=nfs,vboxsf,fuse,ramfs,9p,vfat

# Set this to yes to allow libvirt_lxc connections to work without SELinux.

#libvirt_lxc_noseclabel = yes

[colors]

#highlight = white

#verbose = blue

#warn = bright purple

#error = red

#debug = dark gray

#deprecate = purple

#skip = cyan

#unreachable = red

#ok = green

#changed = yellow

#diff_add = green

#diff_remove = red

#diff_lines = cyan

[diff]

# Always print diff when running ( same as always running with -D/--diff )

# always = no

# Set how many context lines to show in diff

# context = 3

Based on the default config, we will create our own configuration file;

vim $HOME/ansible/ansible.cfg

In the configuration file, we will define some basic values;

[defaults]

inventory = /home/kifarunix/ansible/hosts

roles_path = /home/kifarunix/ansible/roles

private_key_file = /home/kifarunix/.ssh/id_ansible_rsa

interpreter_python = /usr/bin/python3

...

Whenever you use a custom Ansible configuration file, you can point Ansible to it using the ANSIBLE_CONFIG environment variable. As the comments in the default configuration above note, Ansible also picks up an ansible.cfg in the current working directory.

Let's set the environment variable to make it easy;

echo "export ANSIBLE_CONFIG=$HOME/ansible/ansible.cfg" >> $HOME/.bashrc

source $HOME/.bashrc

With this, you won't have to specify the path to the Ansible configuration file, or to any path defined in it, on the command line.
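Note that re-running the echo command above appends a duplicate line to .bashrc each time. A minimal sketch of an idempotent alternative follows; append_once is a hypothetical helper name, and the example writes to a scratch file rather than your real .bashrc.

```shell
#!/bin/sh
# append_once: hypothetical helper that adds a line to a file
# only when that exact line is not already present.
append_once() {
    line=$1
    file=$2
    touch "$file"
    # -x matches whole lines, -F treats the line as a fixed string.
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Demonstrated on a scratch file; use "$HOME/.bashrc" for real use.
rc_file=$(mktemp)
append_once "export ANSIBLE_CONFIG=$HOME/ansible/ansible.cfg" "$rc_file"
append_once "export ANSIBLE_CONFIG=$HOME/ansible/ansible.cfg" "$rc_file"

# The export line is present once even though append_once ran twice.
grep -c ANSIBLE_CONFIG "$rc_file"
```

After sourcing the real file, the config file line in the ansible --version output should show the custom path instead of None.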

Create Ansible Inventory File

The inventory file contains a list of hosts and groups of hosts that are managed by Ansible. By default, the inventory file is set to /etc/ansible/hosts. You can specify a different inventory file using the -i option or using the inventory option in the configuration file. We set our inventory file to /home/kifarunix/ansible/hosts in the configuration above;

vim /home/kifarunix/ansible/hosts

[debian_nodes]
192.168.56.142
192.168.56.153

[rhel_nodes]
192.168.56.144
192.168.56.152

Save and exit the file.

The hosts file supports other layouts as well. The above is just one example.

Below is the sample hosts file shipped as /etc/ansible/hosts;

cat /etc/ansible/hosts

# This is the default ansible 'hosts' file.

#

# It should live in /etc/ansible/hosts

#

# - Comments begin with the '#' character

# - Blank lines are ignored

# - Groups of hosts are delimited by [header] elements

# - You can enter hostnames or ip addresses

# - A hostname/ip can be a member of multiple groups

# Ex 1: Ungrouped hosts, specify before any group headers.

#green.example.com

#blue.example.com

#192.168.100.1

#192.168.100.10

# Ex 2: A collection of hosts belonging to the 'webservers' group

#[webservers]

#alpha.example.org

#beta.example.org

#192.168.1.100

#192.168.1.110

# If you have multiple hosts following a pattern you can specify

# them like this:

#www[001:006].example.com

# Ex 3: A collection of database servers in the 'dbservers' group

#[dbservers]

#

#db01.intranet.mydomain.net

#db02.intranet.mydomain.net

#10.25.1.56

#10.25.1.57

# Here's another example of host ranges, this time there are no

# leading 0s:

#db-[99:101]-node.example.com
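To check how Ansible parses an inventory, you can ask it to print the group tree. The sketch below writes the two-group inventory from this guide to a scratch directory (so it does not touch ~/ansible/hosts) and, assuming Ansible is installed, prints the groups with ansible-inventory --graph. The inv_dir variable is introduced here for illustration only.

```shell
#!/bin/sh
# Write the example inventory to a scratch directory.
inv_dir=$(mktemp -d)
cat > "$inv_dir/hosts" <<'EOF'
[debian_nodes]
192.168.56.142
192.168.56.153

[rhel_nodes]
192.168.56.144
192.168.56.152
EOF

# ansible-inventory ships with Ansible; skip the check if it is missing.
if command -v ansible-inventory >/dev/null 2>&1; then
    ansible-inventory -i "$inv_dir/hosts" --graph
else
    echo "ansible-inventory not found; inventory written to $inv_dir/hosts"
fi
```

The same command run against ~/ansible/hosts shows whether every managed node landed in the group you intended.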

Run Ansible Passphrase Protected SSH Key Without Prompting for Passphrase

We are trying to automate tasks here. However, if you set up a passphrase-protected SSH key, you will be prompted to enter the passphrase for every single command run against a managed node.

As a workaround, you can use ssh-agent to cache your key, so that you only need to enter the passphrase once per session, as follows;

eval "$(ssh-agent -s)"

ssh-add /home/kifarunix/.ssh/id_ansible_rsa

You will be prompted to enter your passphrase.

Test Connection to Ansible Managed Nodes

Now that you have set up SSH key authentication and an inventory of the managed hosts, run the Ansible ping module to check that the hosts are reachable;

ansible all -m ping -u kifadmin

Sample output;

192.168.56.153 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.56.144 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.56.152 | SUCCESS => {

"changed": false,

"ping": "pong"

}

192.168.56.142 | SUCCESS => {

"changed": false,

"ping": "pong"

}

All seems good so far!

Create Filebeat Playbook Roles and Tasks

Ansible playbooks define a set of tasks to be executed on each managed host. An Ansible role groups a collection of related tasks into a reusable unit.

This is our Ansible directory structure;

tree ~/ansible/

/home/kifarunix/ansible/
├── ansible.cfg
├── filebeat-configs
│   ├── elastic-ca.crt
│   └── filebeat.yml
├── hosts
├── main.yml
└── roles
    └── filebeat
        └── tasks
            ├── Debian.yml
            ├── main.yml
            └── Rhel.yml

4 directories, 8 files

In this example setup, we will create a role named filebeat. As mentioned, a role is made up of various tasks.

Thus, let's create a directory to work from.

mkdir -p ~/ansible/roles/filebeat/tasks

Also, create a directory to store custom Filebeat configurations.

mkdir ~/ansible/filebeat-configs/

So what tasks do we have in our Filebeat deployment?

We have tasks to;

- install Filebeat on Debian/Ubuntu systems

- install Filebeat on RHEL based systems (Rocky Linux/Oracle Linux)

Note that we are installing Filebeat 8.x since we are running ELK v8.5.2.

Create Ansible task to install Filebeat on Debian systems

vim ~/ansible/roles/filebeat/tasks/Debian.yml

---
- name: Install gnupg
  apt:
    name: gnupg2
    state: present
    update_cache: yes

- name: Install ELK APT Repo Signing Key
  apt_key:
    url: "{{ ELK_APT_REPO_KEY }}"
    state: present

- name: Install ELK 8.x APT Repo
  apt_repository:
    repo: "{{ ELK_8_APT_REPO }}"
    state: present

- name: Update Repo cache and install Filebeat
  apt:
    name: filebeat=8.5.2
    update_cache: yes

Create Ansible task to install Filebeat on RHEL systems

vim ~/ansible/roles/filebeat/tasks/Rhel.yml

---
- name: Install ELK YUM Repo Signing Key
  rpm_key:
    key: "{{ ELK_YUM_REPO_KEY }}"
    state: present

- name: Install ELK 8.x YUM Repo
  yum_repository:
    name: elastic-8.x
    description: Elastic repository for 8.x packages
    baseurl: "{{ ELK_8_YUM_REPO }}"
    gpgcheck: yes
    enabled: yes

- name: Update Repo cache and install Filebeat
  yum:
    name: filebeat-8.5.2
    update_cache: yes
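Note that the two task files pin the same Filebeat version with different syntax: the apt task uses name=version, while the yum task uses name-version. The helper below, pkg_spec, is a hypothetical illustration of that difference and is not used by the playbook.

```shell
#!/bin/sh
# pkg_spec: hypothetical helper that formats a pinned package name
# the way each package manager expects it.
pkg_spec() {
    family=$1
    name=$2
    version=$3
    case "$family" in
        debian) printf '%s=%s\n' "$name" "$version" ;;  # apt style
        rhel)   printf '%s-%s\n' "$name" "$version" ;;  # yum style
        *) echo "unknown family: $family" >&2; return 1 ;;
    esac
}

pkg_spec debian filebeat 8.5.2   # prints filebeat=8.5.2
pkg_spec rhel filebeat 8.5.2     # prints filebeat-8.5.2
```

When you move to a newer Filebeat release, the version string has to be updated in both task files.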

Create Main Ansible tasks to install Filebeat

vim ~/ansible/roles/filebeat/tasks/main.yml

---
- name: Debian/Ubuntu Filebeat Installation Task
  include_tasks: "Debian.yml"
  when: ansible_distribution in ['Debian', 'Ubuntu']

- name: RedHat/Rocky/CentOS Agent Installation Task
  include_tasks: "Rhel.yml"
  when: ansible_os_family == "RedHat"

- name: Enable Filebeat System Module
  shell: |
    filebeat modules enable system
    sed -i 's/false/true/g' /etc/filebeat/modules.d/system.yml
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

- name: Backup existing Filebeat configuration file
  copy:
    src: /etc/filebeat/filebeat.yml
    dest: /etc/filebeat/filebeat.yml.bak
    remote_src: yes
    backup: yes
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

- name: Copy custom Filebeat configuration file
  copy:
    src: /home/kifarunix/ansible/filebeat-configs/filebeat.yml
    dest: /etc/filebeat/filebeat.yml
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

- name: Copy ELK CA Cert file
  copy:
    src: /home/kifarunix/ansible/filebeat-configs/elastic-ca.crt
    dest: /etc/ssl/certs/elastic-ca.crt
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

- name: Update hosts file with ELK server address
  lineinfile:
    path: /etc/hosts
    line: "{{ elk_ip }} {{ elk_hostname }} {{ elk_alias }}"
    state: present
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

- name: Start and enable Filebeat Service
  systemd:
    name: filebeat
    state: started
    enabled: yes
  when: ansible_distribution in ['Debian', 'Ubuntu'] or ansible_os_family == "RedHat"

The above is pretty much self-explanatory.
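One step worth spelling out is the sed command in the Enable Filebeat System Module task. The sketch below runs the same substitution against a throwaway file so you can see its effect without touching a real node. The file content is a trimmed stand-in for /etc/filebeat/modules.d/system.yml and is an assumption about its layout, not a copy of the shipped file.

```shell
#!/usr/bin/env bash
# Work on a throwaway copy so nothing under /etc/filebeat is touched.
workdir="$(mktemp -d)"
module_file="$workdir/system.yml"

# Trimmed stand-in for /etc/filebeat/modules.d/system.yml (assumed layout).
cat > "$module_file" <<'EOF'
- module: system
  syslog:
    enabled: false
  auth:
    enabled: false
EOF

# Same substitution as the task: every "false" in the file becomes "true".
# (-i edits the file in place; this form is GNU sed syntax.)
sed -i 's/false/true/g' "$module_file"

cat "$module_file"
```

Because the pattern matches every occurrence of false in the file, any other setting that happens to be false is flipped too. A narrower pattern such as s/enabled: false/enabled: true/ would limit the change to the enabled keys if that matters in your environment.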

Create Main Ansible Playbook

vim ~/ansible/main.yml

Here, we define the group of hosts to run the installation against, the variables applied to the play, the roles to execute, and the user to run the roles as.

---
- hosts: all
  gather_facts: True
  vars:
    elk_ip: "192.168.56.124"
    elk_hostname: "elk.kifarunix.com"
    elk_alias: "elk"
  remote_user: kifadmin
  become: yes
  roles:
    - filebeat
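The three elk_* variables are joined by the lineinfile task into a single /etc/hosts entry, and the task only adds that entry if it is not already present. The following is a rough shell equivalent of that behaviour, run against a temporary file instead of the real /etc/hosts. The ensure_line helper is a hypothetical name introduced for this sketch only.

```shell
#!/usr/bin/env bash
# Values copied from the vars section of the play.
elk_ip="192.168.56.124"
elk_hostname="elk.kifarunix.com"
elk_alias="elk"

# ensure_line: hypothetical helper that appends a line only if an
# identical line is not already in the file.
ensure_line() {
  local file="$1" line="$2"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Use a temporary file instead of the real /etc/hosts.
hosts_file="$(mktemp)"
printf '127.0.0.1 localhost\n' > "$hosts_file"

entry="$elk_ip $elk_hostname $elk_alias"
ensure_line "$hosts_file" "$entry"
ensure_line "$hosts_file" "$entry"   # second call finds the line and adds nothing

cat "$hosts_file"
```

This is why re-running the playbook does not keep appending duplicate entries to /etc/hosts on the managed nodes.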

We also updated the hosts (inventory) file with the respective OS family variables;

cat ~/ansible/hosts

[debian_nodes]

192.168.56.142

192.168.56.153

[rhel_nodes]

192.168.56.144

192.168.56.152

[debian_nodes:vars]

ELK_APT_REPO_KEY=https://artifacts.elastic.co/GPG-KEY-elasticsearch

ELK_8_APT_REPO=deb https://artifacts.elastic.co/packages/8.x/apt stable main

[rhel_nodes:vars]

ELK_YUM_REPO_KEY=https://packages.elastic.co/GPG-KEY-elasticsearch

ELK_8_YUM_REPO=https://artifacts.elastic.co/packages/8.x/yum
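In this inventory, each [group:vars] section applies its variables to the hosts listed under the matching [group] section. To make that grouping concrete, here is a small sketch that lists the hosts of one group from an INI-style inventory using awk. The group_hosts function is hypothetical, and the sample file is a shortened copy of the inventory above. On the control node itself, ansible-inventory -i ~/ansible/hosts --graph reports the grouping as Ansible sees it.

```shell
#!/usr/bin/env bash
# group_hosts: hypothetical helper that prints the hosts listed under
# [group] in an INI-style inventory, ignoring all other sections
# (including [group:vars]).
group_hosts() {
  local inventory="$1" group="$2"
  awk -v section="[$group]" '
    /^\[/          { in_group = ($0 == section); next }  # section header
    in_group && NF { print $1 }                          # non-empty host line
  ' "$inventory"
}

# Shortened copy of the inventory, written to a temporary file.
inventory="$(mktemp)"
cat > "$inventory" <<'EOF'
[debian_nodes]
192.168.56.142
192.168.56.153

[rhel_nodes]
192.168.56.144
192.168.56.152

[rhel_nodes:vars]
ELK_8_YUM_REPO=https://artifacts.elastic.co/packages/8.x/yum
EOF

group_hosts "$inventory" rhel_nodes
```

The two hosts printed for rhel_nodes are the ones that receive ELK_YUM_REPO_KEY and ELK_8_YUM_REPO, which is what lets Rhel.yml reference those variables.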

Use Ansible to Deploy Filebeat

First of all, let’s ensure we are not prompted for the SSH key passphrase;

eval "$(ssh-agent -s)"
ssh-add /home/kifarunix/.ssh/id_ansible_rsa

Next, do a dry run of your Ansible playbook to check what would happen and fix any would-be issues;

ansible-playbook ~/ansible/main.yml -C --ask-become-pass

Sample output;

BECOME password:

PLAY [all] *****************************************************************************************************************************************************************

TASK [Gathering Facts] *****************************************************************************************************************************************************

ok: [192.168.56.144]

ok: [192.168.56.152]

ok: [192.168.56.142]

ok: [192.168.56.153]

TASK [filebeat : Debian/Ubuntu Filebeat Installation Task] *****************************************************************************************************************

skipping: [192.168.56.144]

skipping: [192.168.56.152]

included: /home/kifarunix/ansible/roles/filebeat/tasks/Debian.yml for 192.168.56.142, 192.168.56.153

TASK [filebeat : Install gnupg] ********************************************************************************************************************************************

changed: [192.168.56.142]

changed: [192.168.56.153]

TASK [filebeat : Install ELK APT Repo Signing Key] *************************************************************************************************************************

changed: [192.168.56.142]

changed: [192.168.56.153]

TASK [filebeat : Install ELK 8.x APT Repo] *********************************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.142]

TASK [filebeat : Update Repo cache and install Filebeat] *******************************************************************************************************************

fatal: [192.168.56.153]: FAILED! => {"changed": false, "msg": "No package matching 'filebeat' is available"}

fatal: [192.168.56.142]: FAILED! => {"changed": false, "msg": "No package matching 'filebeat' is available"}

TASK [filebeat : RedHat/Rocky/CentOS Agent Installation Task] **************************************************************************************************************

included: /home/kifarunix/ansible/roles/filebeat/tasks/Rhel.yml for 192.168.56.144, 192.168.56.152

TASK [filebeat : update-crypto-policies to import the key] *****************************************************************************************************************

skipping: [192.168.56.144]

skipping: [192.168.56.152]

TASK [filebeat : Install ELK YUM Repo Signing Key] *************************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Install ELK 8.x YUM Repo] *********************************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Update Repo cache and install Filebeat] *******************************************************************************************************************

fatal: [192.168.56.152]: FAILED! => {"changed": false, "failures": ["No package filebeat-8.5.2 available."], "msg": "Failed to install some of the specified packages", "rc": 1, "results": []}

fatal: [192.168.56.144]: FAILED! => {"changed": false, "failures": ["No package filebeat-8.5.2 available."], "msg": "Failed to install some of the specified packages", "rc": 1, "results": []}

PLAY RECAP *****************************************************************************************************************************************************************

192.168.56.142 : ok=5 changed=3 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

192.168.56.144 : ok=4 changed=2 unreachable=0 failed=1 skipped=2 rescued=0 ignored=0

192.168.56.152 : ok=4 changed=2 unreachable=0 failed=1 skipped=2 rescued=0 ignored=0

192.168.56.153 : ok=5 changed=3 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0

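Seems good. The errors encountered are expected in a dry run: check mode does not actually add the Elastic repositories to the nodes, so the Filebeat package is not yet available to install.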
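If you would rather not scan the recap by eye, the sketch below picks out the hosts that reported failures. The failed_hosts function is a hypothetical helper. The first two recap lines are taken from the dry run above, and the third host (192.0.2.10) is made up to show a clean result.

```shell
#!/usr/bin/env bash
# failed_hosts: hypothetical helper that reads ansible-playbook output on
# stdin and prints every host whose PLAY RECAP line has failed > 0.
failed_hosts() {
  awk '
    /^PLAY RECAP/ { in_recap = 1; next }
    in_recap && match($0, /failed=[0-9]+/) {
      # "failed=" is 7 characters long; the rest of the match is the count.
      count = substr($0, RSTART + 7, RLENGTH - 7)
      if (count + 0 > 0) print $1
    }
  '
}

# Sample recap: two lines from the dry run plus one made-up clean host.
recap='PLAY RECAP *********
192.168.56.142 : ok=5 changed=3 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0
192.168.56.153 : ok=5 changed=3 unreachable=0 failed=1 skipped=0 rescued=0 ignored=0
192.0.2.10 : ok=12 changed=9 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0'

printf '%s\n' "$recap" | failed_hosts
```

In practice you could pipe the output of ansible-playbook into failed_hosts. Note that ansible-playbook also returns a non-zero exit status when a host fails, which is usually the simpler signal to check in scripts.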

Let’s now run the Ansible playbook to deploy Filebeat for real by omitting the -C option from the ansible-playbook command above.

ansible-playbook ~/ansible/main.yml --ask-become-pass

BECOME password:

PLAY [all] *****************************************************************************************************************************************************************

TASK [Gathering Facts] *****************************************************************************************************************************************************

ok: [192.168.56.153]

ok: [192.168.56.144]

ok: [192.168.56.152]

ok: [192.168.56.142]

TASK [filebeat : Debian/Ubuntu Filebeat Installation Task] *****************************************************************************************************************

skipping: [192.168.56.144]

skipping: [192.168.56.152]

included: /home/kifarunix/ansible/roles/filebeat/tasks/Debian.yml for 192.168.56.142, 192.168.56.153

TASK [filebeat : Install gnupg] ********************************************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.142]

TASK [filebeat : Install ELK APT Repo Signing Key] *************************************************************************************************************************

changed: [192.168.56.142]

changed: [192.168.56.153]

TASK [filebeat : Install ELK 8.x APT Repo] *********************************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.142]

TASK [filebeat : Update Repo cache and install Filebeat] *******************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.142]

TASK [filebeat : RedHat/Rocky/CentOS Agent Installation Task] **************************************************************************************************************

skipping: [192.168.56.142]

skipping: [192.168.56.153]

included: /home/kifarunix/ansible/roles/filebeat/tasks/Rhel.yml for 192.168.56.144, 192.168.56.152

TASK [filebeat : update-crypto-policies to import the key] *****************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Install ELK YUM Repo Signing Key] *************************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Install ELK 8.x YUM Repo] *********************************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Update Repo cache and install Filebeat] *******************************************************************************************************************

changed: [192.168.56.152]

changed: [192.168.56.144]

TASK [filebeat : update-crypto-policies to default] ************************************************************************************************************************

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Enable Filebeat System Module] ****************************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.152]

changed: [192.168.56.144]

changed: [192.168.56.142]

TASK [filebeat : Backup existing Filebeat configuration file] **************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.144]

changed: [192.168.56.142]

changed: [192.168.56.152]

TASK [filebeat : Copy custom Filebeat configuration file] ******************************************************************************************************************

changed: [192.168.56.153]

changed: [192.168.56.142]

changed: [192.168.56.144]

changed: [192.168.56.152]

TASK [filebeat : Copy ELK CA Cert file] ************************************************************************************************************************************

ok: [192.168.56.142]

ok: [192.168.56.153]

ok: [192.168.56.144]

ok: [192.168.56.152]

TASK [filebeat : Update hosts file with ELK server address] ****************************************************************************************************************

changed: [192.168.56.142]

changed: [192.168.56.153]

ok: [192.168.56.144]

ok: [192.168.56.152]

TASK [filebeat : Start and enable Filebeat Service] ************************************************************************************************************************

changed: [192.168.56.153]

ok: [192.168.56.144]

changed: [192.168.56.142]

changed: [192.168.56.152]

PLAY RECAP *****************************************************************************************************************************************************************

192.168.56.142 : ok=12 changed=9 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

192.168.56.144 : ok=13 changed=8 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

192.168.56.152 : ok=13 changed=9 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

192.168.56.153 : ok=12 changed=9 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

Filebeat should now be installed and running on all the nodes;

Confirm the status of Filebeat;

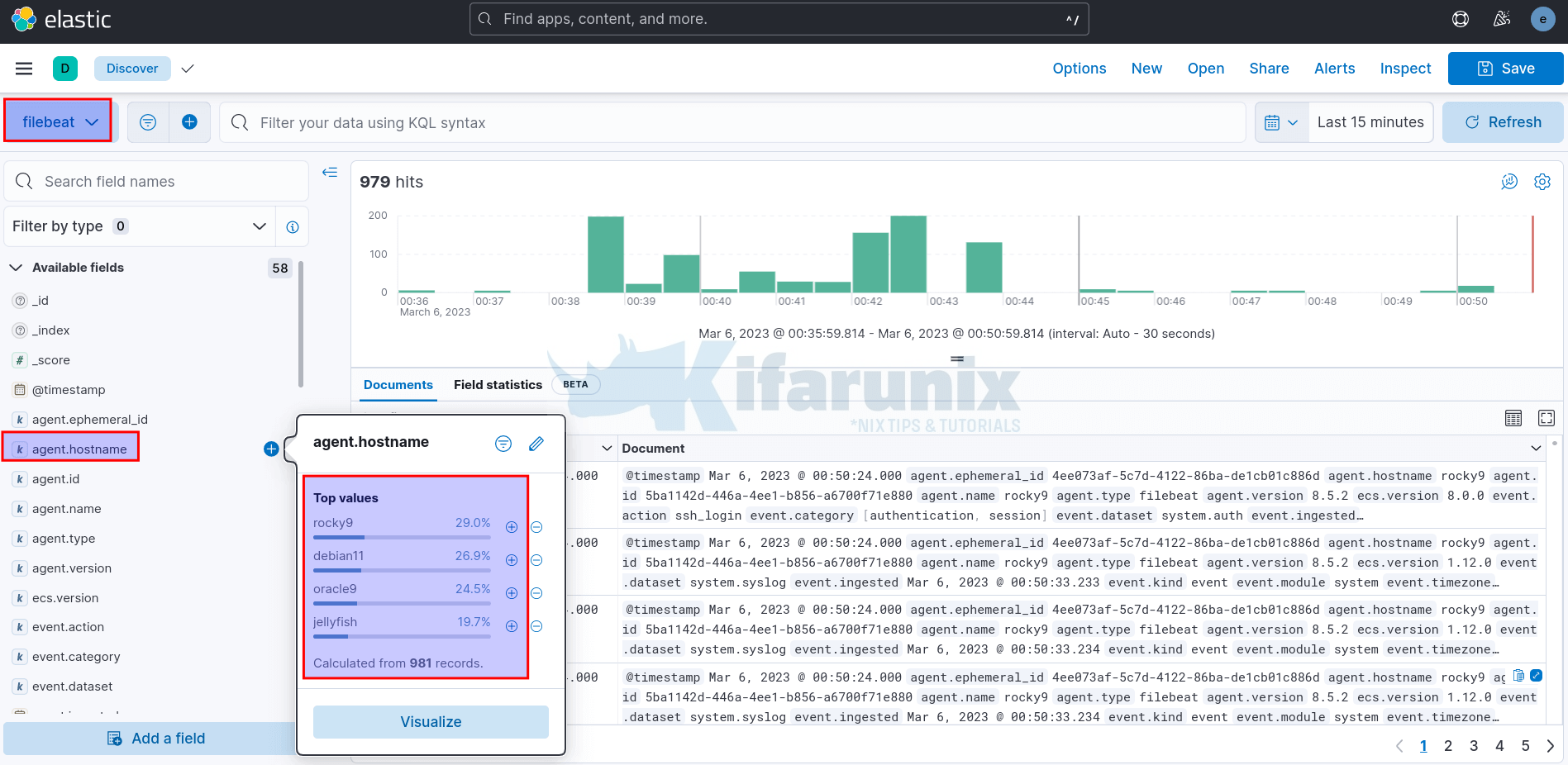

ansible -m shell -a "systemctl status filebeat" --ask-become-pass -u kifadmin all

Log in to the Kibana dashboard and confirm that events are being received from the nodes.

Feel free to modify the playbooks as you so wish.